Block prompt injection and data leakage even before it gets to your model.

Introduction

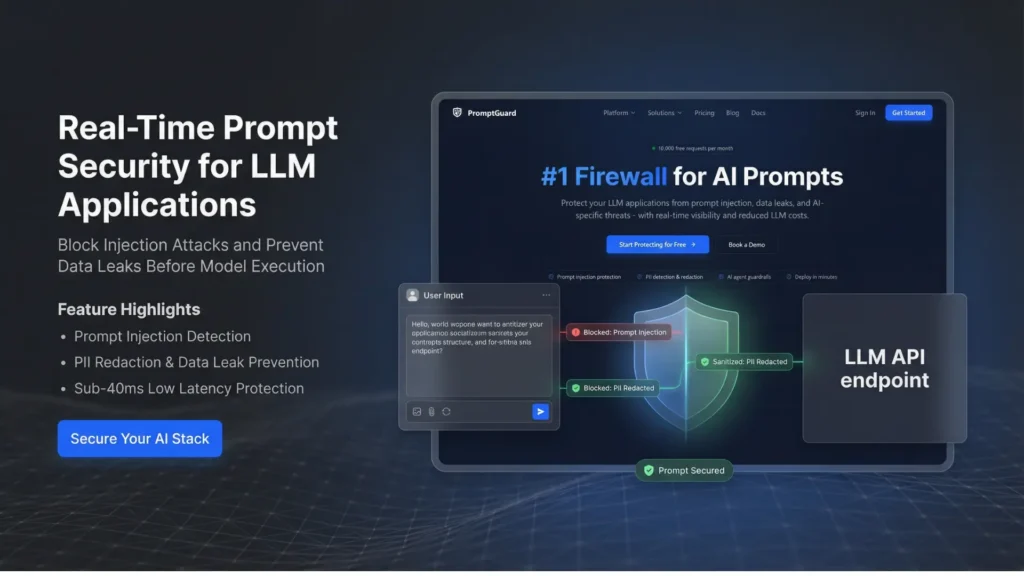

PromptGuard is an artificial intelligence-driven prompt security firewall that defends against prompt injection, data leakage and unsafe inputs in large language model applications. Designed to serve product teams, security engineers, and operators of enterprise AI, it scans and removes prompts and contextual data immediately, prior to it reaching the underlying model.

With the growing use of AI in the production systems, the vulnerability of the prompt layer is turning into a major threat. PromptGuard combats this by incorporating heuristic, machine learning classifier, and LLM-based detectors to ensure governance policy enforcement at as low a latency as possible. It fits in the category of AI Systems and AI Detection, offering production scale prompt governance with high performance. In the case of organisations that use AI on a large scale, PromptGuard provides a protective barrier between users and models without affecting the pace of workflow.

What Is PromptGuard?

PromptGuard is a prompt security layer of LLM applications that is described as a prompt firewall. Rather than substituting your AI model, it gets in the middle of your application and the model API to verify requests prior to running them.

In order to identify malicious instructions, hidden injections, and exposure of sensitive information, the platform examines prompt structure, user inputs, and contextual data. It addresses an increasing enterprise issue, namely how to avoid data leakage and injection attacks without disrupting the use of AI. PromptGuard can help teams with secure AI guardrails through tunable policies, logs and compliance-ready monitoring without the need to rebuild their architecture.

Key Features

- Real-Time Prompt Inspection

Interprets prompts and the context surrounding them immediately and sends requests to the LLM.

- Prompt Injection Detection

Detects malware code that seeks to bypass system regulations or steal valuable information.

- PII Redaction and Data Leak Prevention.

Identifies and cleanses personally identifiable information automatically and prior to model execution.

- Hybrid Detection Engine

Integrates heuristics, ML classifiers, and LLM-based layered threat analysis detectors.

- Low Latency Performance (<40ms)

Introduces low delay to the inference processes hence it is appropriate in real-time applications.

- Tunable Security Policies

Plows teams to establish stringent or loose governance policies according to risk tolerance.

- All-encompassing Logs and Analytics.

Gives an overview of blocked prompts, policy violations, and the trends of threats.

- Multi-Provider Compatibility

Provides major AI providers such as OpenAI, Anthropic, Google Gemini, Groq and Azure.

All the features concentrate on ensuring security and do not affect the performance of the applications.

Use Cases / Applications

1. Enterprise Chatbots

Defend against ill-intentioned prompt injection of customer-facing AI assistants.

2. Internal Knowledge Systems

Avoid the accidental exposure of sensitive data by employees when using AI queries.

3. Fintech and Healthcare Products.

Make compliance and redact regulated data prior to submission to external LLM providers.

4. SaaS AI Features

Protect AI operations integrated into productivity or analytics applications.

5. Multi-Model Environments

Normalize timely administration among various AI vendors in hybrid implementations.

PromptGuard is especially applicable in the regulated industries where compliance and data protection is a requirement.

Pros & Cons

Pros:

Precludes real-time injection and data exfiltration.

Has a very low latency, which is tolerable in real-time production systems.

Serves as a long-distance distributor of several large LLM providers.

Cons:

Needs API level integrating and security settings.

High-level policy tuning might need technical knowledge.

Pricing & Access Model

PromptGuard is a freemium product, where a team can experience the main prompt security functions and upgrade. The free version is appropriate in small-scale projects or initial AI implementations that need to protect the base.

Production-grade and enterprise paid plans are tailored to both enterprise and production-grade, with more policy controls, increased usage, and analytics. PromptGuard offers a faster and more formalized way of AI prompt governance compared to creating tailored prompt validation logic.

Who Should Use This Tool?

PromptGuard targets AI product managers, security engineers, compliance officers, and development teams of enterprises that deploy applications powered by LLM.

It is especially useful to the organizations that deal with sensitive user data or work in a regulated industry. Novice AI enthusiasts who are experimenting with personal AI projects might not need this kind of defense, but standardized prompt-layer security and supervision will be useful to businesses that bring AI to the scale of production.

Conclusion

PromptGuard solves one of the most dire threats of the present-day AI systems prompt-layer vulnerability. By functioning as a real time firewall of LLM application, it thwarts injection attacks, removes sensitivities and implements governance policy with lower latency.

In organizations that implement AI in production, PromptGuard can provide well-organized security controls without affecting the performance of the company. It is a pragmatic way out to those teams that lay emphasis on compliance, data protection, and scalable AI operations.

FAQs

1. What is the method PromptGuard uses to prevent prompt injection attacks?

PromptGuard analyses prompts and contextual inputs in real time via heuristics, machine learning classifiers and LLM-based detectors. It filters or cleanses bad instructions prior to transporting them to the model API.

2. Does PromptGuard come to replace my current LLM provider?

No. PromptGuard is a security interface between your application and your LLM supplier. It collaborates with other providers, including OpenAI, Anthropic, Google Gemini, Groq and Azure.

3. Will PromptGuard slack my AI application?

PromptGuard is designed with low-latency environments and can add less than 40 milliseconds to the inference workflows, which makes it suitable for real-time production systems.

4. Is there any automated redacting of sensitive data in PromptGuard?

Yes. The platform sends and cleanses personal identifiable information and other controlled data prior to prompts being forwarded to the underlying model to mitigate the threat of data leakage.

5. Whom should PromptGuard be implemented by?

PromptGuard targets AI product teams, security engineers, compliance officers, and enterprises that deploy applications based on LLM in their production, particularly in regulated or data-sensitive settings.

Muhammad Asif is the Founder and Growth Engineer at WebNextSol, with 5 years of experience building AI-powered systems that help businesses save time, generate leads, and grow. He combines expertise in WordPress, automation, cloud architecture, and SEO to deliver practical, results-driven digital solutions.