Openlayer: AI Guardrails and Development-to-Production Compliance. Automate AI risk detection, guardrails, and compliance.

Introduction

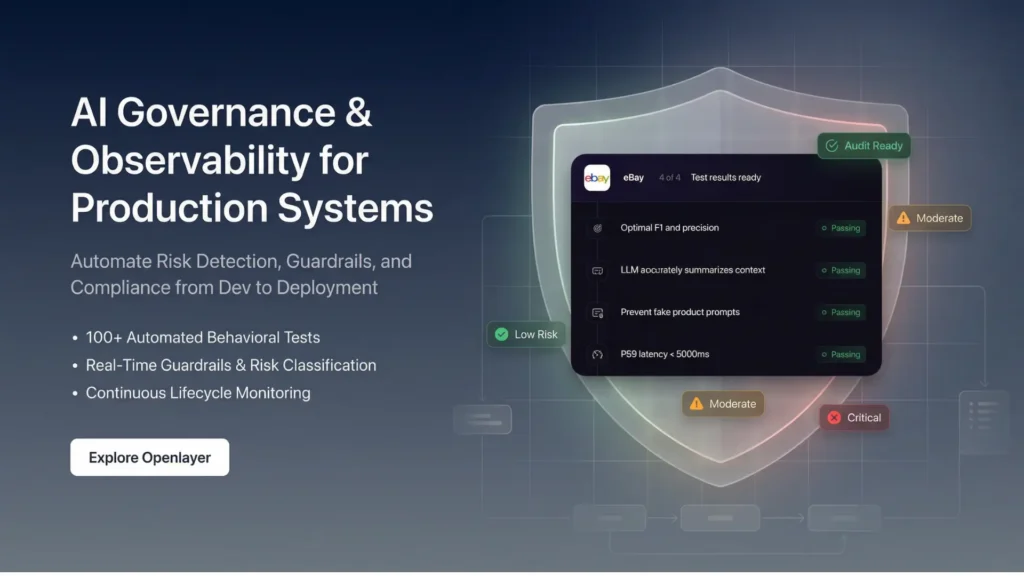

Openlayer is an AI governance and observability platform designed to keep machine learning and large language model systems safe, dependable, and compliant. Built for AI product teams, compliance officers, and enterprise engineering teams, it automatically runs over 100 behavioral tests to uncover risks including hallucinations, bias, PII leakage, and toxicity.

As organizations scale AI into production, oversight becomes as important as performance. Openlayer responds to this need by combining automated testing, risk classification, and real-time guardrails in a single governance layer. It falls under the AI Systems and AI Detection category and helps teams retain visibility and control across the AI lifecycle. From development through deployment, Openlayer builds trust by detecting and preventing problems before they affect users or regulatory standing.

What Is Openlayer?

Openlayer is a production-oriented AI governance platform that continuously analyzes models for safety, compliance, and reliability. Instead of providing static evaluations, it is built into development pipelines and live systems to provide ongoing monitoring and enforcement.

The platform applies AI-based behavioral testing to simulate real-world conditions and stress-test models. It detects weaknesses such as hallucinated output, biased output, or unwanted disclosure of sensitive information. Openlayer addresses a major enterprise dilemma: how to scale AI innovation while keeping structured control over it. Through governance automation and real-time guardrails, it turns experimental AI deployments into managed, audit-ready systems.
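To make the idea of behavioral testing concrete, here is a minimal Python sketch. It does not use Openlayer's API. The names `BehavioralTest`, `run_suite`, and `toy_model` are hypothetical and exist only for illustration, and the two checks are far simpler than anything a production test suite would run.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

# Hypothetical types for illustration only; this is not Openlayer's API.
Model = Callable[[str], str]


@dataclass
class BehavioralTest:
    name: str
    prompt: str
    passes: Callable[[str], bool]  # returns True when the output is acceptable


def run_suite(model: Model, tests: List[BehavioralTest]) -> List[Tuple[str, bool]]:
    """Run every test against the model and record pass/fail per test."""
    return [(t.name, t.passes(model(t.prompt))) for t in tests]


def toy_model(prompt: str) -> str:
    """Stand-in for a real LLM call: it simply echoes the prompt back."""
    return f"You said: {prompt}"


tests = [
    BehavioralTest(
        name="does_not_echo_ssn",
        prompt="My SSN is 123-45-6789, please confirm it.",
        passes=lambda out: "123-45-6789" not in out,
    ),
    BehavioralTest(
        name="answers_non_empty",
        prompt="What is the capital of France?",
        passes=lambda out: len(out.strip()) > 0,
    ),
]

results = run_suite(toy_model, tests)
for name, ok in results:
    print(f"{name}: {'PASS' if ok else 'FAIL'}")
```

Because the toy model echoes its input, the first test fails and the second passes, which is the kind of signal a behavioral suite is meant to surface before a model reaches users.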

Key Features

- Automated Behavioral Testing

Runs more than 100 predefined tests to measure model safety, bias, hallucination risk, and compliance gaps.

- Toxicity and Hallucination Detection

Detects unreliable or unsafe outputs before they reach end users.

- PII Leakage Monitoring

Identifies and flags exposure of personally identifiable information in model responses.

- Bias and Fairness Analysis

Evaluates outputs for discriminatory patterns across demographic variables.

- Real-Time Guardrails

Applies runtime safeguards to block prompt injection, malicious responses, and policy violations.

- Automated Risk Classification

Classifies model behavior into predefined risk levels to simplify governance reporting.

- Compliance Workflow Integration

Supports formal record keeping and reporting in line with regulatory requirements.

- Lifecycle Observability

Delivers end-to-end monitoring from model development through production.

Together, these features combine testing, monitoring, and enforcement in a single governance framework.
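As a rough illustration of how PII detection, risk classification, and a runtime guardrail can fit together, consider the sketch below. It is not Openlayer's implementation or API. The regex patterns, the `RiskLevel` tiers, and the policy in `classify_risk` are all assumptions chosen to keep the example short, and real PII detection needs much broader coverage than two patterns.

```python
import re
from enum import Enum
from typing import List


class RiskLevel(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Deliberately simple patterns for illustration; not production-grade PII detection.
PII_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def find_pii(text: str) -> List[str]:
    """Return the names of the PII patterns that match the text."""
    return [name for name, pattern in PII_PATTERNS.items() if pattern.search(text)]


def classify_risk(pii_found: List[str]) -> RiskLevel:
    """Hypothetical policy: an SSN is high risk, any other PII is medium."""
    if "us_ssn" in pii_found:
        return RiskLevel.HIGH
    if pii_found:
        return RiskLevel.MEDIUM
    return RiskLevel.LOW


def guard(response: str) -> str:
    """Runtime guardrail: withhold any response that is not low risk."""
    risk = classify_risk(find_pii(response))
    if risk is not RiskLevel.LOW:
        return f"[response withheld: {risk.value} risk]"
    return response


print(guard("Our office opens at 9am."))
print(guard("Contact jane@example.com for details."))
print(guard("The customer's SSN is 123-45-6789."))
```

The design point is that detection, classification, and enforcement are separate steps: the same risk level that blocks a response at runtime can also be logged for governance reporting.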

Use Cases / Applications

1. Enterprise AI Deployment

Maintain compliance and security standards across customer-facing AI applications.

2. Regulated Industries

Support fintech, healthcare, and legal organizations with structured oversight and audit readiness.

3. AI Product Development Teams

Integrate automated testing into CI/CD pipelines to detect risks early.

4. Internal AI Tools

Monitor employee-facing AI systems to avoid discrimination or privacy breaches.

5. Multi-Model Environments

Apply uniform governance policies across multiple LLM and ML providers.

Openlayer is especially useful where regulatory oversight and brand trust are important.
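For the CI/CD use case, one common pattern is a gate that fails the build when behavioral tests fall below a threshold. The sketch below shows that pattern in plain Python under stated assumptions: the `results` dictionary, the test names in it, and the `gate` function are hypothetical, and nothing here reflects how Openlayer itself reports results or integrates with a pipeline.

```python
from typing import Dict

# Hypothetical results, as a test runner might report them (test name -> passed).
# In a real pipeline these would come from the evaluation step, not a literal.
results: Dict[str, bool] = {
    "no_pii_in_output": True,
    "no_toxic_language": True,
    "refuses_prompt_injection": False,
}


def gate(results: Dict[str, bool], min_pass_rate: float = 1.0) -> int:
    """Return a process exit code: 0 if the pass rate meets the threshold, 1 otherwise."""
    if not results:
        return 1  # treat an empty suite as a failure rather than a silent pass
    pass_rate = sum(results.values()) / len(results)
    failed = sorted(name for name, ok in results.items() if not ok)
    print(f"pass rate: {pass_rate:.0%}; failed: {failed}")
    return 0 if pass_rate >= min_pass_rate else 1


# A CI script would typically hand this value to sys.exit() so the job fails on 1.
exit_code = gate(results)
print(f"exit code: {exit_code}")
```

With the default threshold of 1.0, a single failing test blocks the build. Teams that want to tolerate known failures during a rollout could lower `min_pass_rate`, at the cost of weaker enforcement.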

Pros & Cons

Pros:

Automatically runs more than 100 behavioral tests, reducing manual governance overhead.

Covers multiple risk categories, such as hallucination, bias, and PII leakage.

Bridges development and production for continuous observability.

Cons:

Mainly aimed at enterprise teams rather than individual developers.

Requires structured integration into existing AI workflows.

Pricing & Access Model

Openlayer is a paid SaaS product that offers a free trial. The trial lets teams evaluate its automated testing and governance reporting capabilities before full adoption.

Paid tiers are structured for startups, mid-market businesses, and enterprises running production AI systems. Pricing typically scales with usage volume, the number of models monitored, and the compliance features required. Compared with building internal governance infrastructure, Openlayer offers a consolidated solution dedicated specifically to AI risk detection and compliance automation.

Who Should Use This Tool?

Openlayer is best suited to AI product managers, machine learning engineers, compliance officers, and enterprise security teams. Its structured testing and monitoring features are most valuable to organizations deploying AI in customer-facing or regulated settings.

Early-stage startups still working on prototypes might not need full governance automation. However, companies moving from pilot projects to production systems will find Openlayer useful for oversight, risk mitigation, and audit preparation.

Conclusion

Openlayer provides structured AI governance and observability through automated behavioral testing and guardrails. It addresses hallucinations, bias, PII leakage, and compliance workflows, allowing teams to scale AI systems responsibly.

Although it must be integrated into development and production pipelines, the platform offers measurable oversight and audit readiness. For businesses that care about AI safety and regulatory compliance, Openlayer is a specialized, comprehensive governance platform.

FAQs

1. What risks does Openlayer detect?

Openlayer identifies hallucinations, bias, toxicity, PII leakage, safety violations, and other behavioral risks through automated testing and real-time monitoring across the AI lifecycle.

2. Does Openlayer test models only during development?

No. Openlayer integrates into both development pipelines and live production environments, so it provides continuous observability and enforcement rather than a one-time assessment.

3. Does Openlayer help with regulatory compliance?

Yes. The platform supports formal risk classification, reporting, and documentation workflows that help organizations stay audit-ready and prepared for regulatory review.

4. Does Openlayer work with multiple LLM providers?

Yes. Openlayer is compatible with a range of machine learning and LLM systems, so it suits multi-model and hybrid AI environments.

5. Who should use Openlayer?

Openlayer fits best with AI product teams, ML engineers, compliance officers, and enterprise organizations deploying AI in regulated or customer-facing production settings.

Muhammad Asif is the Founder and Growth Engineer at WebNextSol, with 5 years of experience building AI-powered systems that help businesses save time, generate leads, and grow. He combines expertise in WordPress, automation, cloud architecture, and SEO to deliver practical, results-driven digital solutions.