1. DeepRails: Guardrails That Correct LLM Hallucinations in Production

1.1 Introduction

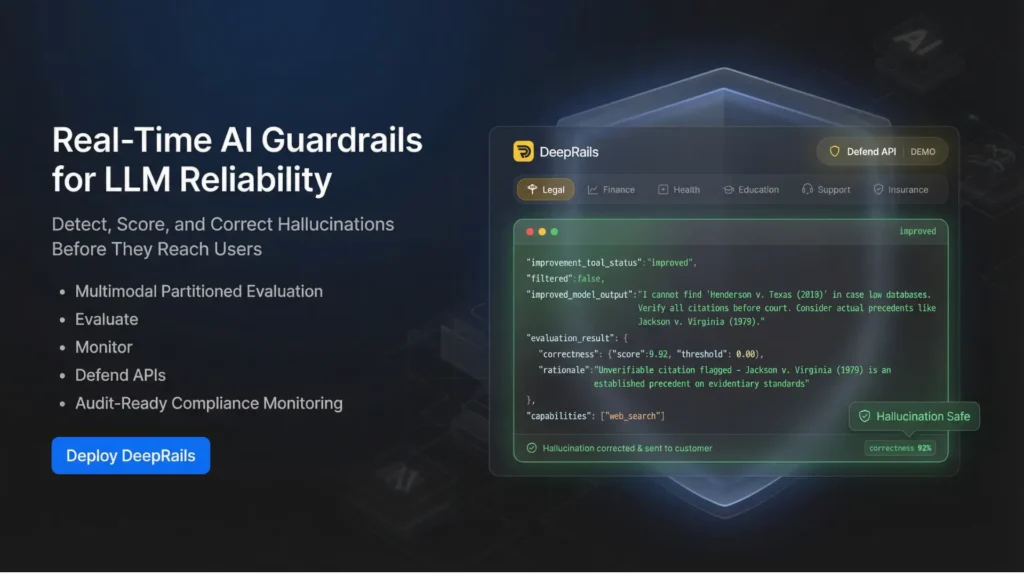

DeepRails is an AI reliability platform that detects, scores, and automatically corrects hallucinations from large language models in production. It is built for AI product teams, enterprises, and compliance-focused organizations, and it provides model-agnostic guardrails for applications through real-time APIs.

As AI deployments scale, hallucinations, safety breaches, and model drift pose growing operational and reputational risk. DeepRails addresses this with its proprietary Multimodal Partitioned Evaluation engine and three main APIs: Evaluate, Monitor, and Defend. The platform falls under the AI Systems and AI Detection subcategory and lets teams implement guardrails easily, reduce churn caused by errors, and maintain audit-ready controls. The result is AI output that is safe, compliant, and performant.

1.2 What Is DeepRails?

DeepRails is a production-grade AI guardrails platform focused on real-time hallucination correction and safety enforcement. Unlike simple monitoring dashboards, it is proactive: it adjusts outputs before they are sent to users.

The platform is model-agnostic and integrates with any large language model, so it also works in multi-LLM setups. Its proprietary Multimodal Partitioned Evaluation engine examines responses across both structured and unstructured inputs to identify factual inconsistencies, safety violations, and drift. DeepRails addresses a fundamental enterprise question: how do we scale AI systems without compromising trust, compliance, or reliability? By introducing automated guardrails, it makes experimental AI deployments enterprise-ready.
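To make the idea of a model-agnostic, proactive guardrail concrete, here is a minimal sketch of the general pattern: wrap any model behind a plain function, score its draft, and correct it before it is returned. This is not the DeepRails SDK or API. Every name here (`guarded_call`, `GuardedResult`, the `threshold` parameter, and the toy model, evaluator, and corrector) is a hypothetical stand-in chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical types for illustration only; none of these come from DeepRails.
Model = Callable[[str], str]             # any LLM provider wrapped as prompt -> text
Evaluator = Callable[[str, str], float]  # (prompt, response) -> score in [0, 1]
Corrector = Callable[[str, str], str]    # (prompt, response) -> corrected response


@dataclass
class GuardedResult:
    response: str
    score: float
    corrected: bool


def guarded_call(prompt: str, model: Model, evaluate: Evaluator,
                 correct: Corrector, threshold: float = 0.8) -> GuardedResult:
    """Score a draft response and correct it before release if it scores low."""
    draft = model(prompt)
    score = evaluate(prompt, draft)
    if score >= threshold:
        return GuardedResult(draft, score, corrected=False)
    fixed = correct(prompt, draft)
    # Re-score the corrected text so the caller sees the final score.
    return GuardedResult(fixed, evaluate(prompt, fixed), corrected=True)


# Toy stand-ins: two "providers", a keyword evaluator, and a fixed corrector.
def provider_a(prompt: str) -> str:
    return "Paris is the capital of France."


def provider_b(prompt: str) -> str:
    return "Lyon is the capital of France."


def toy_evaluate(prompt: str, response: str) -> float:
    return 1.0 if "Paris" in response else 0.0


def toy_correct(prompt: str, response: str) -> str:
    return "Paris is the capital of France."


question = "What is the capital of France?"
result_a = guarded_call(question, provider_a, toy_evaluate, toy_correct)
result_b = guarded_call(question, provider_b, toy_evaluate, toy_correct)
```

Because the guardrail only sees a `prompt -> text` function, the same wrapper applies to either provider. A real evaluator and corrector would be far more involved than these keyword checks.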

1.3 Key Features

- Real-Time Hallucination Detection and Correction

Dynamically detects factual inconsistencies and corrects them before responses are sent to users.

- Multimodal Partitioned Evaluation Engine (MPE)

Evaluates text and structured inputs in partitions to improve scoring precision across use cases.

- Evaluate API

Scores generated outputs for hallucinations, safety breaches, and quality at runtime.

- Monitor API

Tracks behavior over time to identify drift and performance degradation in production systems.

- Defend API

Automatically corrects outputs and enforces safety constraints to prevent unsafe or misleading responses.

- Model-Agnostic Guardrails

Works with multiple LLM providers, which keeps the AI stack flexible.

- Audit-Ready Monitoring and Alerts

Provides logs, alerts, and reporting for governance and regulatory needs.

- Hallucination-Safe™ Certification Badge

Lets teams certify outputs that meet a reliability baseline, building user confidence.

All of these features focus on risk control and production stability rather than experimentation.
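The Monitor feature is described above as tracking behavior over time to catch drift. One simple way to picture that idea is a rolling average of evaluation scores compared against a baseline. The sketch below is a generic illustration, not how DeepRails implements monitoring. The `DriftMonitor` class, its `baseline`, `window`, and `tolerance` parameters, and the sample scores are all assumptions.

```python
from collections import deque
from statistics import mean


class DriftMonitor:
    """Hypothetical monitor: flags drift when the rolling mean score
    falls below baseline - tolerance."""

    def __init__(self, baseline: float, window: int = 5, tolerance: float = 0.1):
        self.baseline = baseline
        self.tolerance = tolerance
        self.scores = deque(maxlen=window)  # keeps only the most recent scores

    def record(self, score: float) -> bool:
        """Store a new score and return True if drift is detected."""
        self.scores.append(score)
        # Wait until the window is full before judging drift.
        if len(self.scores) < self.scores.maxlen:
            return False
        return mean(self.scores) < self.baseline - self.tolerance


# Made-up scores: stable at first, then one sharp drop.
monitor = DriftMonitor(baseline=0.9, window=3, tolerance=0.1)
flags = [monitor.record(s) for s in [0.9, 0.9, 0.9, 0.5]]
```

With these made-up scores, only the final reading pulls the three-score average below the 0.8 cutoff, so only the last flag is raised. A production monitor would likely track several metrics and send alerts rather than return a boolean.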

2. Use Cases / Applications

1. Enterprise AI Applications

Add guardrails to customer-facing chatbots, support assistants, and knowledge systems.

2. Healthcare and Fintech Platforms

Prevent unsafe or inaccurate outputs in regulated industries that demand a high degree of compliance.

3. SaaS AI Integrations

Reduce churn by making sure AI-powered product features give reliable responses.

4. Legal and Compliance Teams

Monitor and control AI outputs for policy compliance and risk exposure.

5. Multi-Model Environments

Use a single guardrail layer to standardize reliability across different LLM vendors.

DeepRails is used in industries where output quality directly affects brand trust and regulatory risk.

3. Pros & Cons

Pros:

Detects hallucinations and corrects them in real time instead of simply flagging them.

Works across multiple LLM providers for multi-vendor integration.

Delivers compliance-oriented, audit-ready logs and monitoring.

Cons:

Mainly aimed at production AI teams rather than individual developers.

Requires API integration, which may involve some technical setup.

4. Pricing & Access Model

DeepRails is a paid SaaS product with a free trial for testing. Pricing typically varies with API usage, evaluation volume, and deployment scale.

The free trial lets teams assess guardrail performance and hallucination detection before committing to production integration. Paid plans are tailored to startups, growth-stage SaaS companies, and enterprise deployments that need round-the-clock monitoring and compliance support. For teams weighing it against in-house reliability engineering, DeepRails offers a dedicated solution for AI output integrity.

5. Who Should Use This Tool?

DeepRails is best suited to AI product teams, CTOs, compliance officers, and enterprise developers who need to deploy LLM-based applications into production.

It is not aimed at hobby projects or simple experimentation. Rather, it is for organizations that need reliability, safety enforcement, and auditability. Businesses in regulated industries or high-stakes environments will benefit most. It is especially useful for teams that want to scale AI features while reducing legal and reputational risk.

6. Conclusion

DeepRails fills an important gap in enterprise AI adoption: real-time hallucination correction and enforced guardrails. It lets organizations scale AI by combining detection, monitoring, and automated protection in a single API-based service.

Although it requires technical integration, the platform provides quantifiable gains in reliability, compliance readiness, and user trust. For teams that deploy large language models in production, DeepRails offers a dedicated solution for managing AI risk.

FAQs

1. How does DeepRails remove hallucinations in real time?

DeepRails uses a Multimodal Partitioned Evaluation engine to assess model outputs, assigns reliability scores, and applies automated correction through the Defend API before responses reach end users.

2. Does DeepRails support all LLM providers?

Yes. The platform is model-agnostic and supports multiple large language model providers, which makes it suitable for both single-model and multi-model environments.

3. Does DeepRails modify outputs or is it purely for monitoring?

Unlike passive monitoring tools, DeepRails actively detects, scores, and corrects responses on the fly so that unsafe or erroneous responses do not reach production users.

4. Can DeepRails support compliance and audits?

Yes. The platform offers logging, alerts, and audit-ready reporting to help organizations meet governance, regulatory, and internal risk management standards.

5. Who should use DeepRails?

DeepRails is best suited to AI product teams, enterprises, and regulated industries that use LLMs in production systems where accuracy, safety, and compliance are paramount.

Muhammad Asif is the Founder and Growth Engineer at WebNextSol, with 5 years of experience building AI-powered systems that help businesses save time, generate leads, and grow. He combines expertise in WordPress, automation, cloud architecture, and SEO to deliver practical, results-driven digital solutions.