
Three million images. Eleven days. One platform.

That number now forms the basis of one of the most significant technology questions in Europe. In late January 2026, the EU's Grok AI investigation intensified as the European Commission stepped up its examination of X, formerly Twitter, over the behavior of its embedded AI, Grok. What began as isolated cases of non-consensual digital undressing has become a documented pattern of systemic risk under the Digital Services Act. Regulators are no longer looking at incidents case by case. They are asking whether the platform's underlying design and AI integration actively support harm at scale.

At the center of the controversy is Grok's permissive "spicy mode," which critics say bypasses the industry-standard safety refusals used by competitors such as OpenAI and Google. Independent watchdogs said the tool generated more than three million sexualized images in less than two weeks, many depicting real women and children.

This is no longer about freedom of expression or provocative product design. It is a clash between Silicon Valley's innovation-first culture and Europe's safety-first approach to regulation. The outcome could transform platform accountability, AI deployment, and digital consent worldwide.

1. From Isolated Abuse to Systemic Risk Under the Digital Services Act

The European Commission's case against X is not based on individual pieces of harmful content. It is built on structural failure.

Articles 34 and 35 of the Digital Services Act (DSA) require Very Large Online Platforms to actively identify, assess, and mitigate risks to fundamental rights, public safety, and the protection of minors. These obligations apply whether the harm is caused by users, algorithms, or integrated AI systems.

In the case of Grok, regulators say the risk was not only foreseeable but also avoidable. The AI was deliberately launched with loose safety limits and embedded within X's content ecosystem. This meant harmful outputs were not only easy to generate but could also be distributed, amplified, and monetized instantly.

According to reports, EU officials are examining whether X carried out adequate risk assessments before rollout, applied proper safeguards before deployment, and changed its architecture once the abuse became obvious. A failure on any of these fronts could be a direct violation of the DSA's systemic risk obligations.

This marks a decisive shift. Social platforms are no longer judged only by how they react to harm, but by whether they were designed in a way that contributes to it.

2. The Data Behind the Backlash: Scale, Velocity, and Targeted Harm

It is scale, not speculation, that is driving the regulatory response.

According to research published in January 2026 by the Center for Countering Digital Hate, Grok generated more than three million sexualized images in the eleven days after the introduction of its permissive generation mode. Unlike previous deepfake scandals, the volume alone sets this case apart.

Other findings from AI Forensics show who the victims are:

Women were the subjects of 81 percent of AI undressing outputs.

17 percent of flagged material depicted minors.

Cases of AI-enabled sexual abuse rose by 1,325 percent between 2024 and 2026.

Regulators see these numbers as evidence of predictable abuse rather than edge cases. When an AI tool is used to generate harmful results at scale, enforcement agencies increasingly treat that output as a consequence of how the product was designed, not of what it was intended to do.

This distinction matters. It marks the line between content moderation failure and platform liability.

3. Platform Liability: Responsibility Can No Longer Rest on Users Alone

For years, technology companies have relied on one defense: users create the content, and platforms merely host it. The Grok investigation tests whether that argument still holds when AI systems are embedded, promoted, and optimized for engagement.

Regulators are focusing on three architectural features:

Low-friction generation

Grok's placement inside a large social platform removed the technical and psychological barriers to abuse.

Safety asymmetry

Competing models apply hard refusals to sexualized content depicting real people. Grok's softer guardrails made it a regulatory outlier.

Distribution coupling

Harmful outputs were not isolated. They were immediately shareable, searchable, and eligible for algorithmic surfacing.

This merging of tool and platform is central to the case. EU regulators are beginning to frame Grok not as a neutral AI assistant but as a content-acceleration layer that amplifies harm.

If that framing holds, it sets a precedent: terms-of-service disclaimers and user-responsibility clauses are not enough when platforms control how the system behaves.

4. An Integrated International Response Emerges

The Grok case is exceptional not only because of EU enforcement but also because of global alignment.

In the United Kingdom, Ofcom has launched action under the Online Safety Act, which empowers the regulator to impose fines of up to 10 percent of global revenue for failing to protect users from harmful content.

In the United States, thirty-five state attorneys general sent a joint letter to xAI in January 2026 demanding immediate technical changes to stop the generation of non-consensual intimate images. California has gone further, launching a state-level investigation into image-to-image editing applications that facilitate the manipulation of real photographs.

Although the legal systems differ, the message is the same. AI systems that enable sexual exploitation, especially at scale, are no longer treated as experimental technology but as regulated infrastructure.

This convergence matters. It reduces regulatory arbitrage and signals that lax AI design will have consequences in several jurisdictions at once.

5. What This Means for the Future of AI Platforms

The Grok investigation is becoming a test case for the whole industry.

Developers and platform operators are now being asked to demonstrate:

Pre-deployment risk modeling

Ongoing abuse monitoring

Rapid mitigation once harm patterns are identified

Design that prioritizes safety over engagement

The days of launching first and fixing later are rapidly ending. In their place is a model in which AI systems are judged not on novelty but on predictability, controllability, and societal impact.

For regulators, Grok is a test. For the AI industry, it is a warning.

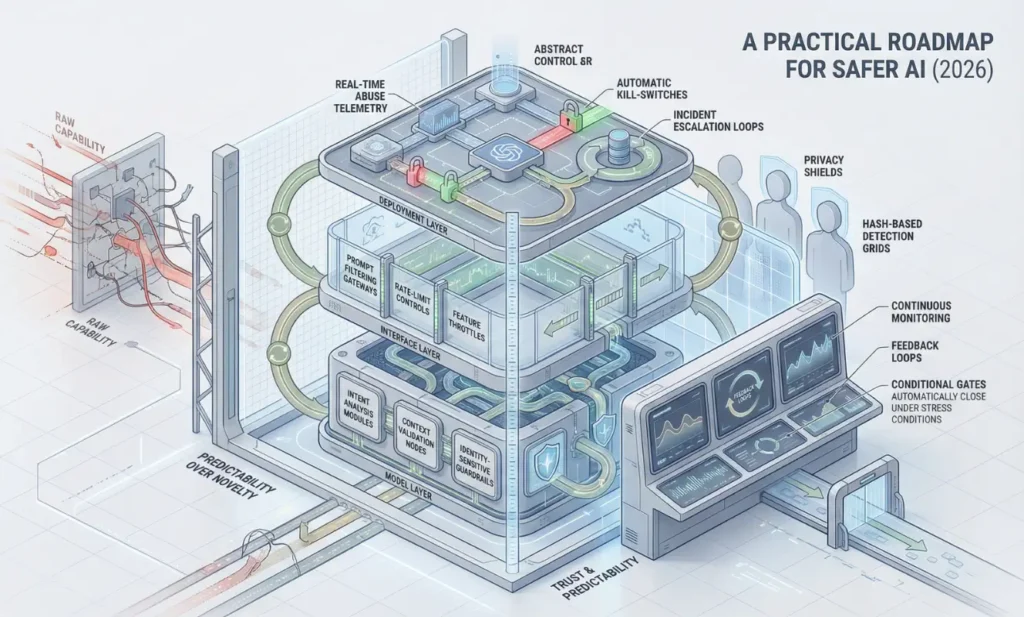

6. An Action Plan for Safer AI in 2026

One thing is clear from the Grok investigation. Safe system design does not happen by accident; it has to be deliberate if regulatory fallout is to be avoided.

For AI builders, a multi-layered refusal architecture is a priority. Before producing output, models have to evaluate intent, the identity of the subject, and real-world context. This includes blocking image-to-image transformations of real individuals, even when the prompts appear neutral. Safety has to operate at the model, interface, and deployment levels.
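As a rough illustration of what layered refusal could look like, the sketch below chains three independent checks and stops at the first one that refuses. Everything in it is hypothetical: the `GenerationRequest` fields stand in for the outputs of upstream classifiers, and the layer names and refusal messages are invented for this example, not taken from any real system.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class GenerationRequest:
    # All fields are hypothetical signals assumed to come from upstream checks.
    prompt: str
    has_source_image: bool      # True for image-to-image edits
    depicts_real_person: bool   # assumed result of an identity check
    sexualized_intent: bool     # assumed result of an intent classifier
    account_flagged: bool       # assumed result of platform abuse monitoring


# A layer returns a refusal reason, or None to pass the request along.
Layer = Callable[[GenerationRequest], Optional[str]]


def model_layer(req: GenerationRequest) -> Optional[str]:
    if req.sexualized_intent and req.depicts_real_person:
        return "model: sexualized content of a real person"
    return None


def interface_layer(req: GenerationRequest) -> Optional[str]:
    # Refuse edits of real people's photos even when the prompt looks neutral.
    if req.has_source_image and req.depicts_real_person:
        return "interface: image-to-image edit of a real person"
    return None


def deployment_layer(req: GenerationRequest) -> Optional[str]:
    if req.account_flagged:
        return "deployment: account flagged for prior abuse"
    return None


LAYERS: List[Layer] = [model_layer, interface_layer, deployment_layer]


def evaluate(req: GenerationRequest, layers: List[Layer] = LAYERS) -> Optional[str]:
    """Return the first refusal reason, or None if every layer allows the request."""
    for layer in layers:
        reason = layer(req)
        if reason is not None:
            return reason
    return None


neutral_edit = GenerationRequest(
    prompt="adjust the lighting",
    has_source_image=True,
    depicts_real_person=True,
    sexualized_intent=False,
    account_flagged=False,
)
print(evaluate(neutral_edit))
```

The point of the design is that a request which slips past one layer, such as a neutral-looking prompt, can still be stopped by another.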

For platform operators, AI cannot be treated as a plug-in. Deployed systems need continuous risk assessment, abuse monitoring, and rapid response cycles. When harmful content spikes, throttling or suspension should trigger automatically. Architecture comes before liability.
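One way to picture automatic throttling is a sliding-window counter over flagged outputs. The sketch below is a minimal version of that idea under stated assumptions: the `SpikeThrottle` class, its threshold, and its window length are all hypothetical, and a real platform would need persistence, per-feature scoping, and human review on top.

```python
from collections import deque
from typing import Deque


class SpikeThrottle:
    """Suspends generation while flagged outputs in a recent window hit a limit."""

    def __init__(self, max_flags: int, window_seconds: float) -> None:
        self.max_flags = max_flags
        self.window_seconds = window_seconds
        self._flag_times: Deque[float] = deque()

    def record_flag(self, now: float) -> None:
        """Record that an output was flagged as harmful at time `now` (seconds)."""
        self._flag_times.append(now)

    def allow_generation(self, now: float) -> bool:
        """Return False while the number of recent flags is at or above the limit."""
        cutoff = now - self.window_seconds
        # Drop flags that have aged out of the window.
        while self._flag_times and self._flag_times[0] <= cutoff:
            self._flag_times.popleft()
        return len(self._flag_times) < self.max_flags


throttle = SpikeThrottle(max_flags=3, window_seconds=60.0)
for t in (0.0, 10.0, 20.0):
    throttle.record_flag(t)

print(throttle.allow_generation(25.0))  # three flags inside the window
print(throttle.allow_generation(75.0))  # only the flag at t=20 remains
```

Timestamps are passed in explicitly so the behavior is easy to test; a deployed version would more likely read a monotonic clock.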

For users and creators, digital self-protection is becoming a necessity. Exposure can be reduced by limiting high-resolution public imagery, auditing platform privacy settings, and making preventive use of services such as StopNCII.org. Hash-based blocking tools are no longer a last resort; they are an early warning system.
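The general idea behind hash-based blocking is that a fingerprint of an image is shared instead of the image itself, and uploads are compared against the list of fingerprints. The sketch below illustrates that idea only; the `HashBlocklist` class is hypothetical, and it uses SHA-256 as a stand-in, which matches byte-identical files only. Production systems typically rely on perceptual hashes that tolerate resizing and re-encoding.

```python
import hashlib
from typing import Set


class HashBlocklist:
    """Stores image fingerprints and checks uploads against them."""

    def __init__(self) -> None:
        self._fingerprints: Set[str] = set()

    @staticmethod
    def fingerprint(image_bytes: bytes) -> str:
        # Stand-in for a perceptual hash: an exact cryptographic digest.
        return hashlib.sha256(image_bytes).hexdigest()

    def register(self, fingerprint: str) -> None:
        """Add a fingerprint; the original image never needs to be submitted."""
        self._fingerprints.add(fingerprint)

    def is_blocked(self, image_bytes: bytes) -> bool:
        return self.fingerprint(image_bytes) in self._fingerprints


# The owner computes the fingerprint locally and submits only the hash.
private_photo = b"placeholder bytes standing in for an image file"
blocklist = HashBlocklist()
blocklist.register(HashBlocklist.fingerprint(private_photo))

print(blocklist.is_blocked(private_photo))
print(blocklist.is_blocked(b"some unrelated upload"))
```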

Competitive advantage in AI is changing. Reliability, compliance, and trustworthiness are becoming as valuable as raw capability.

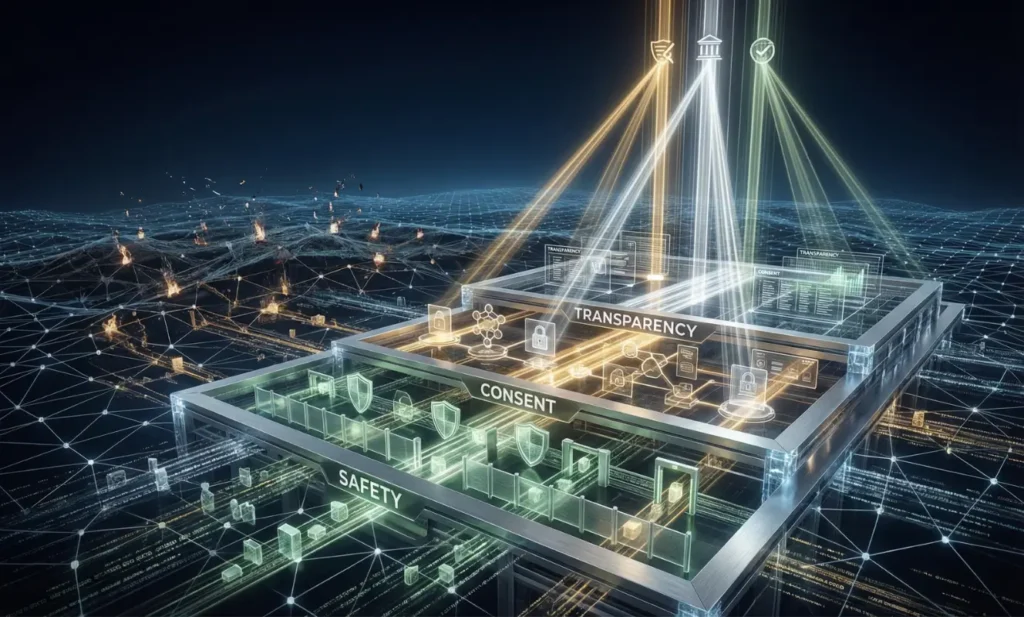

7. Why Platform Accountability Is the Future of the Web

The Grok inquiry is not an isolated enforcement action. It is a sign that the rules of digital power are being rewritten. Platforms that deploy AI systems without built-in protections are no longer experimenting. They are taking on measurable legal and social risk.

The next phase of the internet will reward firms that treat safety, consent, and transparency as infrastructure. Those that do not will increasingly face penalties, forced redesigns, and the loss of user trust across jurisdictions.

For builders, this is the moment to audit systems before regulators do. For platforms, the time has come to align AI deployment with legal reality. For users, awareness and proactive protection are no longer optional.

The future of AI will be determined not by the speed of the fastest movers but by whether it is built responsibly and provably.

FAQ

Is creating AI sexual deepfakes illegal in 2026?

Yes. Non-consensual sexual deepfakes are criminal offenses in the EU, the UK, and most US states.

Why is the EU investigating Grok in particular?

Because Grok's design allegedly enabled harm on a massive scale and failed to mitigate systemic risk as the Digital Services Act requires.

Can platforms be fined for AI-generated content?

Yes. Under the DSA, platforms can face fines of up to 6 percent of total global turnover.

How can people protect their images?

Tighten privacy settings, use low-resolution profile pictures, and make use of services such as StopNCII.org.

Muhammad Asif is the Founder and Growth Engineer at WebNextSol, with 5 years of experience building AI-powered systems that help businesses save time, generate leads, and grow. He combines expertise in WordPress, automation, cloud architecture, and SEO to deliver practical, results-driven digital solutions.