
1. Introduction

Claude AI's involvement in recent US military operations has sparked a large-scale geopolitical and ethical controversy. Within hours of President Trump's federal ban on AI systems developed by Anthropic, US Central Command was reported to have used Claude AI to support intelligence work and target simulation for airstrikes in Iran. The incident shows how deeply Claude AI has penetrated military operations, and it exposes the conflict between business ethics and national security needs. Developers, enterprise teams, and AI strategists now face pressing questions: How do AI companies navigate military contracts? What happens to Claude AI and geopolitics if AI access is unrestricted? This pillar examines how AI technology, global power, and ethical responsibility intersect, and it covers practical enterprise implementation, regulatory foresight, and monetization opportunities.

2. What Is Claude AI?

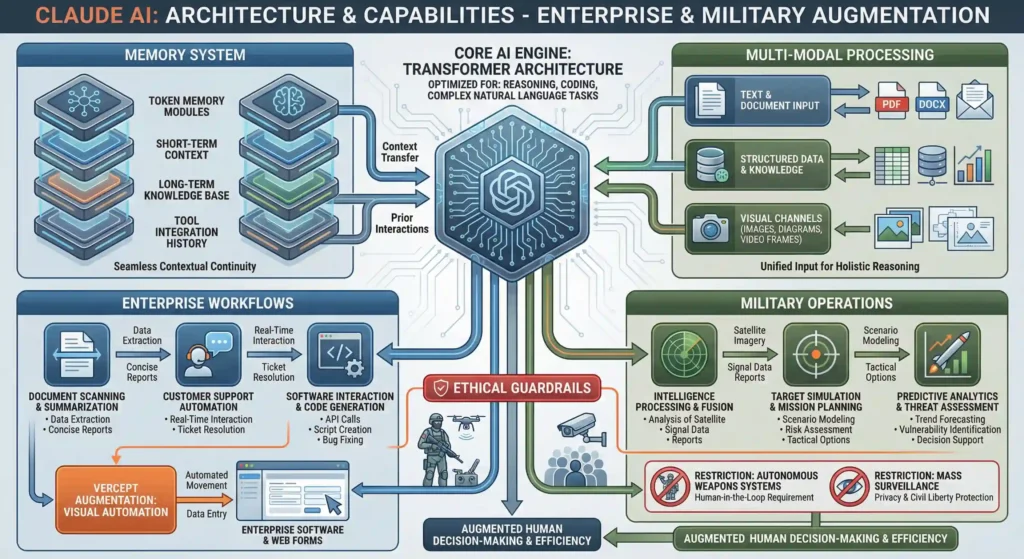

Claude AI is a next-generation large language model built by Anthropic that combines advanced reasoning and multi-modal capabilities with memory-enabled context management. Unlike classical LLMs, Claude has a built-in, high-capacity token memory system that lets users carry contextual knowledge over from other AI tools or prior interactions, providing a seamless experience across applications. This memory capability positions Claude as a leader in AI productivity, enterprise integration, and, more recently, high-stakes military decision support.

Fundamentally, Claude AI is built on a transformer-based architecture optimized for reasoning, coding, and complex natural language tasks. Recent versions, such as Claude Sonnet 4.6, extend to multi-step problem solving, spreadsheet navigation, and software automation. The architecture also supports multi-modal computing, allowing the model to process text, structured information, and visual inputs, which is essential in real enterprise and operational settings.

Anthropic, the company behind Claude, is focused on safety and ethical guardrails. The AI is designed to restrict its use in autonomous weaponry or mass surveillance, which has shaped how both government and business clients adopt it. These protections, though controversial in a defense context, position Claude as a responsible AI platform with clear boundaries, unlike competitors whose models can be deployed for unrestricted uses.

Claude's operational versatility is visible in its inclusion in military procedures, especially those of US Central Command. It has been used for intelligence processing, target selection simulation, and predictive analytics under time-sensitive conditions. At the same time, companies use Claude to scan documents automatically, optimize customer support, and extract data from complicated software interfaces, which demonstrates the model's dual-purpose nature across high-security and business settings.

Augmented with the visual automation technology provided by Vercept, Claude can now interact with web forms and enterprise software, a further step in closing the gap between AI reasoning and actionable implementation. This makes Claude not only a communication and content generation tool but a full-stack AI assistant capable of complex decision support in dynamic settings.

Overall, Claude AI combines cutting-edge LLM technology, ethical design, and multi-domain operational integration. Its memory capabilities, multi-modal reasoning, and enterprise flexibility make it a fundamental component of current AI applications, in business processes and military strategy alike.

3. Trends and Innovations in the Industry

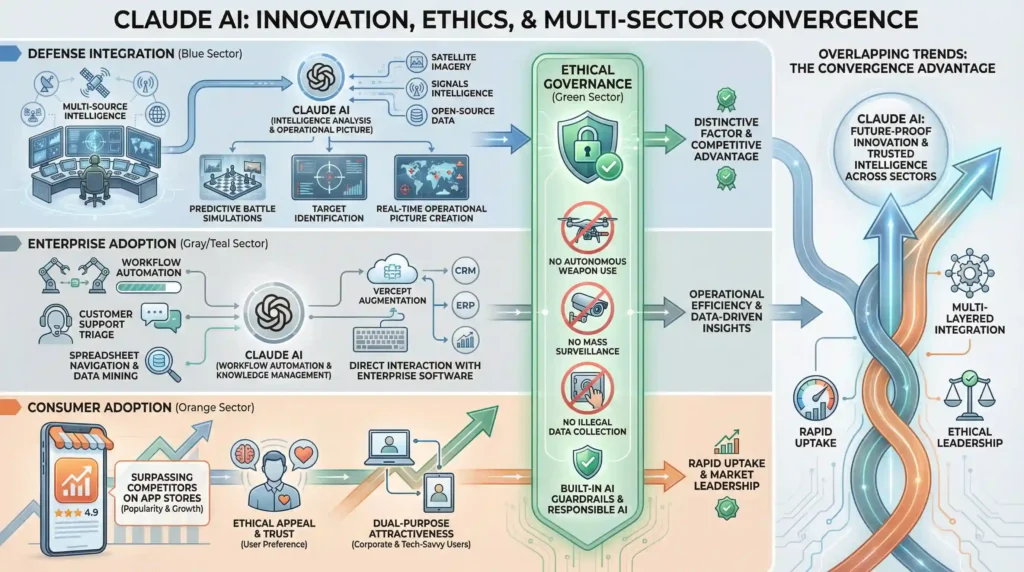

Large language models such as Claude AI are moving beyond the consumer and enterprise space into defense and other high-stakes operations. Recent geopolitical events, such as the US-Israel airstrikes on Iran and earlier intelligence activities, have shown that AI integration into military workflows is no longer hypothetical. The use of Claude AI for intelligence analysis, target identification, and predictive battle simulation highlights how quickly AI has become operationally critical.

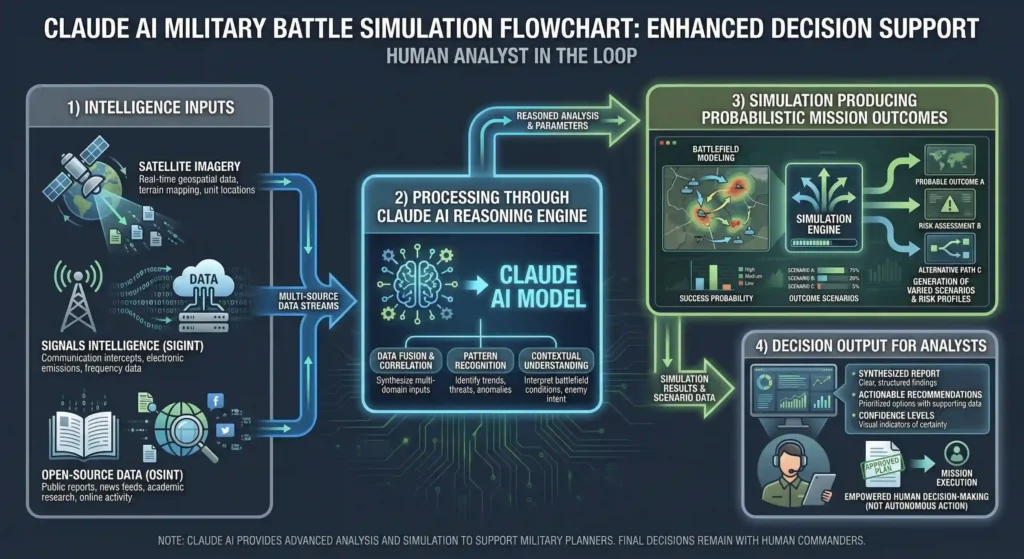

Defense use of AI is becoming more multi-layered. Governments are deploying AI in decision-support systems, logistics optimization, and real-time threat evaluation. Claude AI's memory-based architecture enables commanders to combine intelligence from different sources (satellite images, signals data, and open-source intelligence) into a consistent operational picture in real time. This integration points to an emerging trend: defense organizations cannot easily decouple AI tools once they are embedded in complex, mission-critical processes.

Beyond defense, businesses are quickly embracing Claude AI for automation, data mining, and process optimization. Industries such as finance, healthcare, and software development are singling out more complex tasks, such as spreadsheet navigation and customer support triage, and automating them with the model's advanced reasoning and multi-modal features. The acquisition of Vercept has also expanded Claude's ability to interact directly with enterprise software, automating workflows that previously required human participation.

One notable market trend is the shift in consumer attitudes and adoption levels after political and ethical scandals. Claude AI surpassed ChatGPT to become the most popular free application on the US Apple App Store, driven in part by user interest in an AI platform that is feature-rich while placing greater emphasis on ethical guardrails. This wave suggests a business case for AI models that pair high performance with ethical constraints and that appeal to both corporate purchasers and tech-conscious consumers.

Another trend is innovation in AI governance and ethical usage. Firms such as Anthropic are putting built-in guardrails in place to prevent deployment in autonomous weapons, mass surveillance, or other unlawful surveillance. This approach is gradually becoming a point of differentiation in both the commercial and defense sectors, as regulators and the general public expect responsible AI practices. The trend implies that AI is now assessed not only on technical performance but also on compliance with ethical and legal standards.

In short, Claude AI illustrates several overlapping industry trends: faster uptake in defense and enterprise, ethical governance as a competitive advantage, and user-driven growth in consumer channels. Its introduction into military and enterprise workflows signals a wider change in how AI is applied, governed, and monetized across industries.

4. Practical Applications / Use Cases

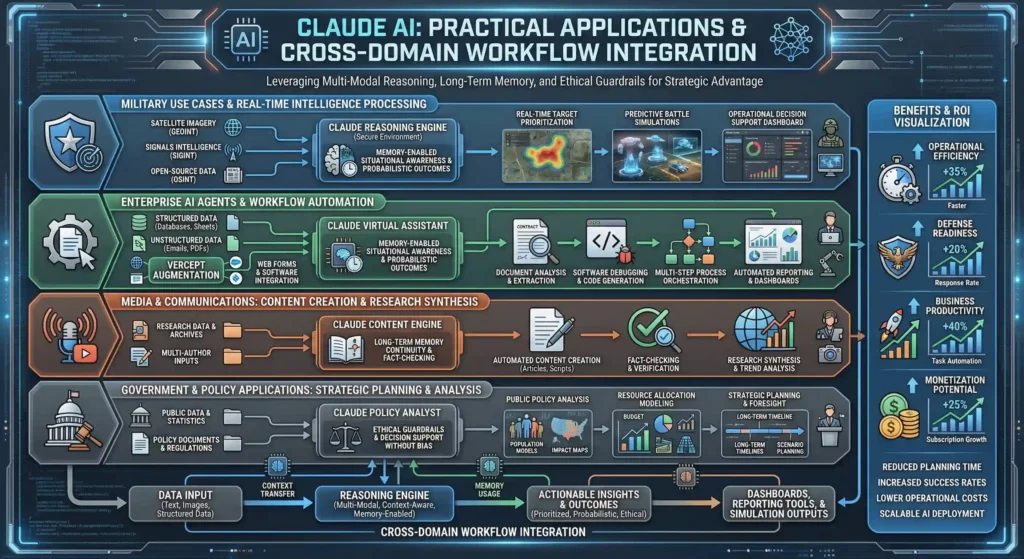

Claude AI shows the breadth and depth of contemporary AI implementation, with applications in military, enterprise, media, and government services. Its support for memory and multi-modal reasoning lets organizations use the model in complex, mission-critical workflows, typically substituting for or supplementing human decision-making in high-stakes situations.

Military Intelligence and Target Simulation

In defense, Claude AI has been used for real-time intelligence analysis, target prioritization, and battle simulations. In the US-Israel airstrikes on Iran and the extraction of Venezuelan President Nicolas Maduro, Claude processed gigabytes of data in the form of satellite photos, signals intelligence, and open-source feeds to identify high-value targets and simulate possible conflict scenarios. Its predictive abilities enable commanders to assess risk, optimize attacks, and anticipate enemy reactions, essentially providing an AI-based warfighting assistant. The memory features allow Claude to retain operational state during a mission, which saves analysis time and improves situational awareness.

Enterprise AI Agents

In enterprise applications, Claude can act as a virtual assistant that applies multi-modal reasoning to complex workflows. Businesses use Claude for document analysis, software automation, and workflow orchestration. For example, finance departments run reconciliation operations in ERP systems, while software developers use Claude to debug code, write scripts, or follow multi-step data pipelines. The Vercept acquisition allows Claude to interact with web forms and enterprise code at a lower level, enabling autonomous AI agents that can perform structured tasks without constant human monitoring.

Media & Communications

In media, Claude AI is applied to content creation, fact verification, and research synthesis. The platform helps journalists and analysts summarize intricate issues, create reports, and derive insights from extensive textual or graphical datasets. Its capacity to retain contextual memory between sessions provides continuity in long-term projects and in multi-author settings.

AI in Sovereign Governance

New applications in government systems include public policy analysis, resource allocation modelling, and strategic planning. Claude can handle large volumes of legislation, population data, and economic indicators to model the results of policy actions. Although its ethical guardrails rule out use in mass surveillance or autonomous weapons, its use in governance planning demonstrates how AI can support decision-making at the sovereign level.

Integration Workflow Illustration

A common workflow starts with data input, whether text, images, or structured databases, which is then analyzed by Claude's reasoning engine. The resulting insights are delivered to operational dashboards, automated reporting tools, or directly into simulation models. This integration produces faster and more precise results, enabling organizations to respond to changing conditions with actionable intelligence.
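The input, analysis, and delivery stages described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `stub_reasoning_engine`, `Insight`, `Dashboard`, and `run_pipeline` are hypothetical names invented here, and the stub merely labels and truncates its input rather than calling any real model or Anthropic API.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable, List, Tuple


@dataclass
class Insight:
    """One analyzed item, ready to be shown on a dashboard."""
    source_kind: str
    summary: str


@dataclass
class Dashboard:
    """Hypothetical sink that simply collects published insights."""
    rows: List[Insight] = field(default_factory=list)

    def publish(self, insight: Insight) -> None:
        self.rows.append(insight)


def stub_reasoning_engine(kind: str, payload: str) -> str:
    """Stand-in for a model call (assumption): labels and truncates the input."""
    return f"[{kind}] {payload[:40]}"


def run_pipeline(
    inputs: Iterable[Tuple[str, str]],
    engine: Callable[[str, str], str],
    sink: Dashboard,
) -> Dashboard:
    """Input -> analysis -> delivery, one item at a time."""
    for kind, payload in inputs:
        sink.publish(Insight(source_kind=kind, summary=engine(kind, payload)))
    return sink


dashboard = run_pipeline(
    [("text", "Quarterly report"), ("table", "region,revenue")],
    stub_reasoning_engine,
    Dashboard(),
)
for row in dashboard.rows:
    print(row.source_kind, "->", row.summary)
```

Passing the engine in as a plain callable keeps the analysis step swappable, so the same pipeline shape would hold whichever model or service sits behind it.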

Benefits / ROI / Monetization

Claude AI provides tangible advantages in defense, enterprise, and commercial applications, making it a valuable asset for organizations seeking operational efficiency and strategic positioning. Because it integrates into complicated workflows, it enables faster decision-making, fewer human errors, and better situational awareness, all of which translate into measurable ROI.

Operational Efficiency and Defense Readiness

In military scenarios, Claude AI is used to speed up intelligence processing and target simulation so that command centers can respond to real-time information with minimal delay. Analysts state that AI-supported operations reduce operational planning time by up to 30 percent, and that predictive simulation improves operational success. Claude maintains memory continuity across missions and reduces redundancy, producing long-term operational efficiency that contributes directly to defense preparedness. These gains also carry over to cost control, staffing, and strategy.

Business Adoption and Productivity

Businesses that use Claude AI see significant productivity gains. Finance teams automate reconciliation, audit preparation, and data validation; software developers automate coding, debugging, and multi-step tasks; and customer support teams use AI agents to answer standard queries while complex cases are escalated to human staff. Companies can realize quantifiable efficiency benefits, which in many cases means increased output without adding headcount. Memory-enabled workflows further increase ROI by reducing repeated work and digitally retaining institutional knowledge.

Business Monetization and Market Development

Claude's subscription-based business model and rapid App Store growth point to its commercial sustainability. Signups and paid subscriptions have doubled year over year, indicating strong market demand. Ethical AI positioning with responsible guardrails strengthens the brand in both the enterprise and consumer arenas, creating differentiation that can command a premium price. Monetization potential can also be extended through ancillary products, including workflow integrations, enterprise dashboards, and AI playbooks for defense and business applications.


5. Challenges / Risks

Although Claude AI offers sophisticated functionality, it raises a range of issues related to ethics, geopolitics, supply chains, and regulatory compliance. Defense and enterprise stakeholders interested in responsible AI adoption need to understand these risks.

Ethical Concerns

Deploying Claude in high-stakes contexts such as military operations and sovereign intelligence raises ethical questions of autonomy, accountability, and unintended consequences. Anthropic has put strong guardrails in place against use in autonomous weapons or mass surveillance, but there is still debate over who bears moral responsibility when life-and-death decisions are made with the help of AI. Ethical failures could expose organizations to reputational harm and legal scrutiny.

Geopolitical Tension

Recent events such as the US airstrikes on Iran demonstrate that the use of AI in defense can heighten tension between countries. Reliance on Claude for mission-critical tasks exposes strategic weaknesses, since opponents can either exploit or neutralize AI-enabled planning. Such dynamics show the geopolitical sensitivity of dual-use AI technologies.

Supply Chain and Operational Risk

Making Claude an essential part of military or enterprise systems creates supply chain dependencies. Any interruption, be it a government ban, a service failure, or a vendor dispute, can halt essential operations. According to reports following the February 28 service outages, even temporary shutdowns can affect operations disproportionately, which underscores how fragile AI-dependent processes can be.
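One common way to soften this kind of single-vendor dependency is a fallback chain that tries providers in order. The sketch below is an assumption-laden illustration, not a description of any real deployment: `ProviderUnavailable`, `call_with_fallback`, `primary`, and `backup` are hypothetical names, and the provider functions are stubs that do not contact any service.

```python
from typing import Callable, List, Tuple


class ProviderUnavailable(Exception):
    """Raised by a provider callable when its service cannot be reached."""


def call_with_fallback(
    prompt: str,
    providers: List[Tuple[str, Callable[[str], str]]],
) -> Tuple[str, str]:
    """Try each provider in order; return (provider_name, response)."""
    failures = []
    for name, provider in providers:
        try:
            return name, provider(prompt)
        except ProviderUnavailable as exc:
            # Record the failure and move on to the next provider.
            failures.append(f"{name}: {exc}")
    raise ProviderUnavailable("all providers failed: " + "; ".join(failures))


def primary(prompt: str) -> str:
    """Stub that simulates an outage (assumption for the example)."""
    raise ProviderUnavailable("service outage")


def backup(prompt: str) -> str:
    """Stub that always answers (assumption for the example)."""
    return f"handled: {prompt}"


result = call_with_fallback(
    "summarize contract",
    [("primary", primary), ("backup", backup)],
)
print(result)
```

Returning the provider name alongside the response makes it easy to log how often the fallback path is taken, which is itself a useful signal of dependency risk.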

Regulatory Uncertainty

AI governance around the world remains incomplete. New laws, export regulations, and national security directives may affect how Claude can be deployed and what legal compliance requires. To avoid penalties and maintain operational continuity, companies and defense agencies have to navigate a changing regulatory environment.

6. Future Signals / Predictions

Claude AI's recent geopolitical exposure and its adoption in enterprises point to larger tendencies that will define the future of artificial intelligence in both the civil and defense arenas. Analysts expect the AI arms race to escalate further, with nation-states and corporations vying to create increasingly capable LLMs for strategic, economic, and security deployments.

AI Arms Race and National Security

Claude AI's involvement in high-stakes military operations is an example of an emerging AI-enabled security paradigm. In the future, LLMs will probably become part of national defense planning, threat simulation, and intelligence synthesis. Scholars expect defense organizations to favor models that combine multi-modal reasoning and memory with independent decision-making, which will accelerate military AI development worldwide.

International Governance and Regulation

Regulatory systems will also develop and strike a better balance between innovation and safety. Multilateral dialogues, possibly through UN or NATO frameworks, will aim at restricting fully autonomous weapon systems, standardizing AI audit measures, and building transparency standards. Firms such as Anthropic that impose ethical guardrails can establish industry standards that shape AI governance at the global level. Adherence to new treaties will be one of the determining factors in market access and geopolitical alignment.

Corporate-Ethical Balance

Competitive advantage will increasingly come from the overlap of corporate AI strategy and ethical responsibility. Enterprises that demonstrate transparent, responsible AI usage will gain credibility, regulatory goodwill, and consumer preference. Conversely, companies that put speed or power ahead of ethics can be exposed to legal, financial, and reputational risks, especially in industries such as defense, health, and finance.

LLMs in National Security and Enterprise Strategy

Large language models will probably play a growing role in decision-making, predictive analytics, and scenario planning. National security agencies can use AI to track threats, predict adversary behavior, and simulate policy implications, while businesses use AI systems to improve strategy, operational performance, and risk management. The trajectory of Claude AI suggests that multi-modal, memory-enabled LLMs will serve as a backbone of both state and corporate intelligence infrastructures.

Battle Simulation Diagrams

Claude AI's involvement in military operations rests on predictive simulation engines. The diagram shows how intelligence inputs (satellite imagery, signals intelligence, and open-source data) flow into Claude's reasoning engine to produce probabilistic results that aid mission planning. These plots help analysts weigh risk trade-offs, the best strike patterns, and possible counteractions by the adversary, and they underline the AI's role as an aid in the decision-support process rather than an autonomous agent.

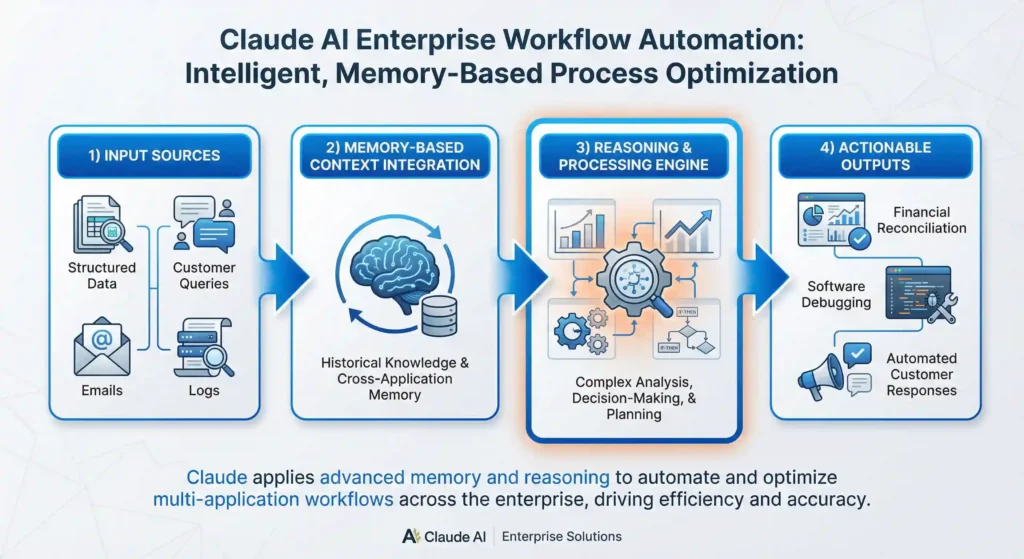

Enterprise AI Workflow Charts

Claude serves as a multi-modal assistant that automates corporate tasks. Processes that can be illustrated in workflow charts include financial reconciliation, software debugging, and customer query handling. The diagram should show Claude taking in structured and unstructured inputs, applying memory-based context, reasoning over them, and providing actionable insights or automated actions across applications.

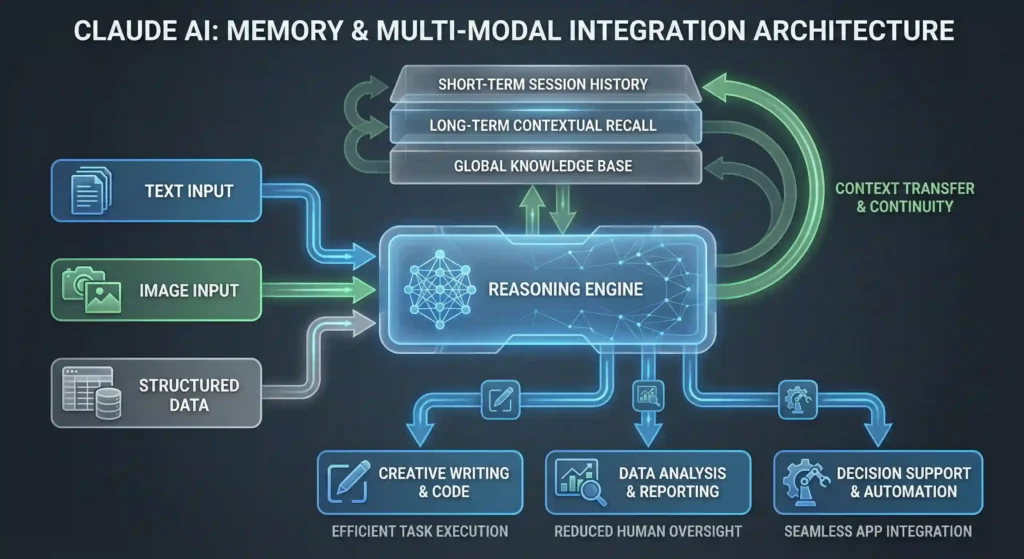

Memory and Multi-Modal Integration

The memory system lets Claude build persistent contextual knowledge across multiple tasks, and multi-modal capabilities enable simultaneous processing of text, images, and structured data. Subsection diagrams can represent the memory layers, showing how session history is stored, how context is shared between applications, and how both feed into multi-modal reasoning pipelines, with an emphasis on continuity, efficiency, and reduced human oversight.
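The idea of stored session history shared across applications can be illustrated with a small bounded buffer. This is a teaching sketch only, and it makes no claim about how Claude's memory is actually implemented: `SessionMemory`, `remember`, `context`, and `max_turns` are hypothetical names chosen for the example.

```python
from collections import defaultdict, deque
from typing import Deque, Dict, Tuple


class SessionMemory:
    """Keeps the most recent turns per session, tagged by originating app."""

    def __init__(self, max_turns: int = 3) -> None:
        # Each session gets its own bounded queue; old turns fall off the front.
        self._history: Dict[str, Deque[Tuple[str, str]]] = defaultdict(
            lambda: deque(maxlen=max_turns)
        )

    def remember(self, session_id: str, app: str, text: str) -> None:
        """Store one turn, recording which application produced it."""
        self._history[session_id].append((app, text))

    def context(self, session_id: str) -> str:
        """Render the retained turns as a context string, oldest first."""
        turns = self._history.get(session_id, ())
        return "\n".join(f"{app}: {text}" for app, text in turns)


memory = SessionMemory(max_turns=3)
memory.remember("s1", "chat", "first")
memory.remember("s1", "erp", "second")
memory.remember("s1", "chat", "third")
memory.remember("s1", "crm", "fourth")  # pushes "first" out of the window
print(memory.context("s1"))
```

The bounded `deque` stands in for any retention policy; a real system would likely weigh relevance rather than simply keeping the latest turns.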


7. FAQ

Q1: Did the Pentagon use Claude AI in a military operation?

Yes. Despite a federal prohibition dated February 26, 2026, US Central Command deployed Claude AI when joint US-Israel airstrikes on Iran commenced on March 1, 2026. The AI was integrated into operational processes and applied to intelligence analysis, target identification, and battle simulations.

Q2: What ethical safeguards does Claude AI have?

Anthropic maintains stringent guardrails that prohibit using Claude for autonomous weapons, mass surveillance, or other harmful purposes. Its memory and multi-modal reasoning capabilities are governed by internal policies that follow legal and ethical standards.

Q3: What are the implications of Claude AI for global AI norms?

Claude's application in military and business contexts has stirred debate over AI regulation, multilateral governance, and ethical use. It highlights the need for multilateral rules to govern dual-use AI technologies in the civilian and military spheres.

Q4: Is it safe for enterprises to roll out Claude AI?

Yes. Claude's multi-modal capabilities and memory can be used to automate productivity tasks, run analysis, and support operational decisions. Organizations should follow compliance requirements, adhere to ethical-use policies, and put risk mitigation measures in place.

Q5: How does Claude compare with OpenAI in the context of defense?

Claude emphasizes ethical guardrails, while OpenAI has accepted integration with the Pentagon. This difference affects deployment flexibility, regulatory risk, and public perception, all of which shape enterprise and government adoption strategies.

Q6: Which industries are best suited to Claude AI?

Claude AI delivers ROI for defense, finance, enterprise software, healthcare, and large-scale analytics teams in the form of efficiency, predictive insights, and strategic decision support.

Q7: Does Claude AI face a legal challenge over military use?

Anthropic has publicly opposed unlimited Pentagon access and intends to sue over any supply chain risk designation, pointing to the continuing conflict between national security requirements and company ethics.

8. Conclusion

Claude AI sits at the intersection of advanced technology, ethics, and political policy. Its use in high-stakes military operations and its rapid adoption by enterprises highlight both the transformative potential and the inherent risks of large language models in decision-making for modern conflict and business.

A key lesson is that AI integration is no longer optional: every organization needs to balance operational efficiency, predictive intelligence, and ROI against moral, legal, and geopolitical considerations. Claude's multi-modal abilities and memory capabilities offer concrete benefits in both warfare and business settings, but they have to be managed carefully to ensure compliance and reduce reputational risk.

Muhammad Asif is the Founder and Growth Engineer at WebNextSol, with 5 years of experience building AI-powered systems that help businesses save time, generate leads, and grow. He combines expertise in WordPress, automation, cloud architecture, and SEO to deliver practical, results-driven digital solutions.