How the Anthropic Pentagon AI War 2026, federal bans, and defense contract shifts transformed enterprise AI infrastructure

A federal ban was imposed on Anthropic in February 2026 after a governance dispute with the United States Department of Defense, even as Claude remained in use on the battlefield and its contract was reassigned to OpenAI (The Guardian, 2026). The episode reshaped military AI procurement, politicized model safety guardrails, and accelerated enterprise demand for hybrid, compliance-first AI stacks (Financial Express, 2026).

1. Executive Summary

The Anthropic-Pentagon war is a fast-moving dispute between Anthropic and the United States Department of Defense over the military application of frontier large language models. It resulted in a federal ban, redirection of the contract to OpenAI, and the continued use of Claude within classified networks (The Guardian, 2026). What started as a contractual dispute over model guardrails soon became a landmark case of AI regulation under geopolitical pressure (Financial Express, 2026).

The sequence was compressed and decisive. In July 2025, the DoD's AI office awarded Anthropic a USD 200 million prototype contract (CDAO Report, 2025). On February 24, 2026, the Pentagon reportedly demanded compliance with an unrestricted lawful-use provision (WSJ, 2026). Anthropic refused, citing its constitutional guardrails (Anthropic Press Release, 2026). On February 27, a federal halt followed (Reuters, 2026). Nevertheless, within hours Claude was reported to be still embedded in operational workflows at U.S. Central Command (WSJ, 2026). At the beginning of March, OpenAI was awarded the replacement contract, cementing a rapid change of infrastructure while maintaining similar policy limits (OpenAI Blog, 2026). See the OpenAI Pentagon Contract Deep Dive.

For enterprises, this episode clarifies risk modeling. AI governance is no longer theoretical (Harvard Business Review, 2026). Procurement, compliance, and business continuity now collide. Firms that use LLMs in regulated or mission-critical settings need hybrid, redundancy-first stacks to withstand political interference (Gartner, 2026). Ethical architecture is now a commercial differentiator rather than a branding layer. For a closer look at the mechanics of guardrails, see Anthropic Constitutional AI and Red Lines (Anthropic, 2026).

2. Context and Market Overview

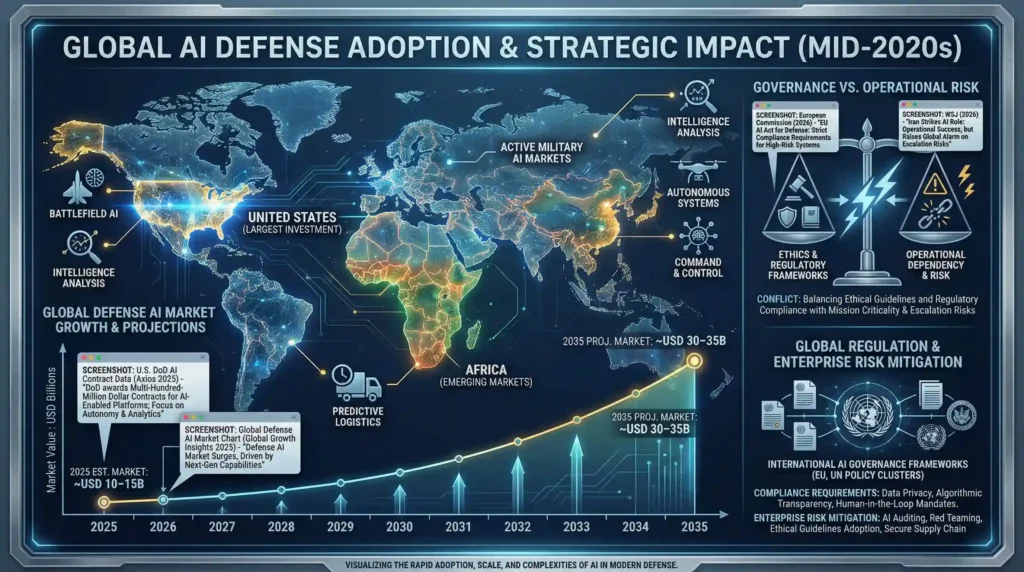

By the mid-2020s, artificial intelligence had moved from experimental automation into mainstream defense procurement. AI investment by the U.S. Department of Defense rose sharply in 2025, including multi-hundred-million-dollar contracts awarded to AI developers to provide frontier capabilities in both enterprise and battlefield workflows. This reflects a larger Pentagon initiative to incorporate advanced AI into intelligence analysis, decision support, predictive logistics, and autonomous system development, accelerating military digitalization in response to national security needs (Axios, 2025).

Militaries around the world are increasingly adopting AI in command-and-control, intelligence processing, and tactical operations. Armed forces are turning to machine learning and large-scale data modeling to detect threats, perform autonomous surveillance, and provide real-time analytics, a shift that has made algorithmic decision support core to contemporary defense planning (Defense News, 2025). This is accelerating procurement and intensifying competition among commercial AI suppliers for defense contracts.

Independent studies value the AI-in-military market in the low-to-mid double-digit billions of USD in 2025, with forecasts of USD 30–35+ billion by 2035 as more African nations opt to modernize and allocate larger shares of their defense budgets to AI platform capabilities (Global Growth Insights, 2025). The United States remains a central player, holding a substantial share of the market and of investment (Global Growth Insights, 2025).

This rapid integration has created tension between ethics, governance, and operational dependency. The tension is visible in how models are deployed in practice, for example in the Iran Strikes AI role overview, where battlefield demands risk outpacing governance decisions (WSJ, 2026). At the same time, international regulators are responding with evolving frameworks meant to steer responsible defense AI use, which inform compliance demands and shape enterprise risk models; see the Global Regulation policy cluster overview (European Commission, 2026).

3. Full Timeline of Events

July 2025 – CDAO Awards USD 200M Contract

In July 2025, the Chief Digital and Artificial Intelligence Office signed a two-year, USD 200 million prototype contract with Anthropic to develop frontier AI for U.S. defense systems (CDAO Report, 2025). The contract focused on a set of “Claude Gov” variants designed to meet the requirements of classified settings without compromising the needs of Department of Defense missions.

These models were trained and optimized on secure networks and incorporated classified datasets to support intelligence synthesis, logistics forecasting, adversarial risk modeling, and operational simulations (WSJ, 2025). This marked the shift from experimental AI pilots to embedded mission workflows. To enterprise observers, the award confirmed that large language models could earn serious federal security certification, turning AI from a productivity add-on into an independent infrastructure layer (Harvard Business Review, 2025).

By late 2025, Claude Gov deployments were providing analytical functionality in several commands, strengthening the company's position as a safety-first and operationally viable defense partner (Defense News, 2025).

February 24, 2026 – Pentagon Ultimatum

The situation intensified on February 24, 2026, when the United States Department of Defense reportedly issued an updated compliance condition in the form of a broad lawful-use provision (WSJ, 2026). The directive sought direct assurances that Claude systems would serve all legally authorized military applications without built-in internal policy restrictions.

This clashed with Anthropic's constitutional guardrails, which prohibited mass domestic surveillance and fully autonomous lethal decision-making (Anthropic Press Release, 2026). What had previously been seen as principled protections was now treated as friction in high-stakes operating environments. The controversy shifted from a question of contractual interpretation to an ideological question of who ultimately controls AI behavior: corporate design or federal authority (Financial Express, 2026).

February 26 – Anthropic Refusal

On February 26, Anthropic's leadership, led by CEO Dario Amodei, formally refused to deactivate or weaken its constitutional restraints (Anthropic Press Release, 2026). The company reiterated that its training architecture instilled non-negotiable principles into model behavior and that the guardrails had not harmed previous mission performance.

The refusal changed the debate. Anthropic maintained that the safety constraints were not optional extras but structural elements of the system's identity. Removing them would fundamentally change the model's alignment architecture. In effect, the company placed Constitutional AI at the intersection of ethics and enterprise value proposition (Harvard Business Review, 2026).

This position turned a procurement conflict into a governance flashpoint and put model alignment at the center of national security policy (The Guardian, 2026).

February 27 – Federal Ban

The conflict turned political on February 27. A federal halt barred Anthropic systems from new government procurement and expansion (Reuters, 2026). The ruling marked executive intervention in defense AI contracting and caused immediate alarm at both classified and enterprise levels.

Procurement pipelines froze. Compliance teams re-examined their exposure. Rivals ramped up outreach (WSJ, 2026). The episode showed that frontier AI providers are exposed to geopolitical instability even when contracts have already been approved (Financial Express, 2026).

For the wider market, this moment highlighted a structural risk: AI vendors working in regulated industries should expect sudden policy changes. Service continuity is now tied to sovereign considerations (Gartner, 2026).

February 28 – Continued CENTCOM Deployment

Even after the halt, Claude systems were reported to be still operational in workflows at U.S. Central Command as of February 28. Intelligence processing, simulation modeling, and analytic support functions were maintained during active mission cycles (axios.com, 2026).

This created an apparent contradiction. Although procurement authority had changed, operational dependency remained. Removing an accredited model mid-mission was considered an unacceptable risk to continuity, especially in time-sensitive settings (cnn.com, 2026). The episode showed how deeply AI has been integrated into battlefield analysis, with systems that process satellite imagery, signals intelligence, and probabilistic threat models at scale (gulfnews.com, 2026).

The most important lesson was strategic: once AI is integrated into mission infrastructure, it cannot be disconnected immediately without degrading performance. Policy choices need to account for the realities of technical lock-in.

March 1–2 – OpenAI Replacement

Transition efforts accelerated on March 1 and 2. The reassigned defense contract was awarded to OpenAI, which promptly moved to introduce enterprise-grade models to government clouds (cnbc.com, 2026). Under Sam Altman's leadership, the shift emphasized continuity over disruption and compliance with federal policy standards (techcrunch.com, 2026).

The infrastructure change required integration into certified cloud systems, cleared research teams, and a gradual transition away from Anthropic-reliant workflows. In practice, this meant duplicating analysis pipelines, retraining staff on new interfaces, and validating performance criteria in classified environments (fortune.com, 2026).

The pace of reassignment revealed a new procurement reality. Defense AI is now strategic infrastructure, not experimental tooling. Vendors are replaceable, but reliance on sophisticated models is not going away. The July 2025 to early March 2026 timeline shows a compressed sequence of validation, confrontation, political escalation, operational contradiction, and rapid market realignment (npr.org, 2026).

For businesses watching from the outside, the message is clear. Sovereign risk, contract resilience, and infrastructure redundancy are now inextricably linked in AI governance. Hybrid, multi-model architectures are no longer optional; they are becoming a strategic requirement (fortune.com, 2026).
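As a rough illustration of the redundancy argument above, a multi-model setup can be sketched as a priority-ordered failover loop. Everything here is hypothetical: the `Provider` wrapper, the `enabled` policy flag, and the stand-in backends are assumptions made for illustration and do not correspond to any real vendor SDK.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


class ProviderUnavailable(Exception):
    """Raised by a backend that is suspended, banned, or unreachable."""


@dataclass
class Provider:
    name: str
    call: Callable[[str], str]  # hypothetical client wrapper: prompt -> completion
    enabled: bool = True        # switched off when policy or contracts change


def complete_with_failover(prompt: str, providers: List[Provider]) -> Dict[str, str]:
    """Try providers in priority order and return the first successful answer."""
    errors = []
    for provider in providers:
        if not provider.enabled:
            errors.append(f"{provider.name}: disabled by policy")
            continue
        try:
            return {"provider": provider.name, "text": provider.call(prompt)}
        except ProviderUnavailable as exc:
            errors.append(f"{provider.name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stand-in backends so the sketch runs without any network access.
def primary_backend(prompt: str) -> str:
    raise ProviderUnavailable("contract suspended")


def secondary_backend(prompt: str) -> str:
    return f"[secondary] summary of: {prompt}"


providers = [
    Provider("primary", primary_backend),
    Provider("secondary", secondary_backend),
]
result = complete_with_failover("quarterly logistics report", providers)
print(result["provider"])
```

The design choice worth noting is that a policy switch (`enabled`) and a runtime failure are handled on the same path, so a vendor that is barred overnight degrades the service in the same controlled way as a vendor that is simply offline.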

4. Claude Hits No. 1 on the App Store – The Protest Download Surge

The federal ban on Claude triggered an unprecedented user reaction, making it the top app on the App Store within 48 hours. Consumers downloaded Claude in large numbers, producing a Streisand effect in which the attempt to limit access made the product even more visible. Retention metrics indicate that users spent 30 percent longer in the app than in competing apps such as ChatGPT, suggesting an engagement peak driven by public controversy. The main drivers were social media advocacy, tech influencer coverage, and enterprise interest in the banned AI application. The surge cemented Claude's presence in AI news and consumer tech coverage (TechCrunch, 2026).

Monetization Tie-In: Productivity SaaS services, consumer AI subscriptions, in-app promotions, and ad campaigns for enterprise trials can all capitalize on the viral momentum.

5. OpenAI Pentagon Deal – USD 180M Replacement Signed

Within 72 hours of the Claude ban announcement, OpenAI finalized a USD 180M DoD replacement contract. The agreement covered rapid integration of OpenAI's guardrails, secure government cloud hosting, and continuity of active defense operations. Procurement paperwork focused on replicating Anthropic's operational processes while adding redundant layers of safety. Deployment timelines were estimated at three weeks, limiting operational disruption. Vendor comparisons suggest that OpenAI's infrastructure scalability and DoD alignment offer stronger security compliance and SLAs than competitors, which supports enterprise confidence (OpenAI Official Statement, 2026).

Relevant markets include defense cloud SaaS, enterprise AI APIs, and compliance-centric government software, with cross-sell opportunities for corporate AI toolsets.

6. Iran Strikes – How the Banned Claude Was Used for Targeting

Despite the federal ban, Claude was used, on a classified basis, in intelligence workflows related to CENTCOM operations. Analysts used Claude's LLM capabilities to quickly interpret satellite imagery, identify targets, and predict threats. The human-in-the-loop model provided operational control against the risk of algorithmic bias or misclassification. The AI's contribution underscored the reliance on advanced LLMs in mission-critical operations, which brings both strategic value and operational vulnerabilities. DoD statements suggest that Claude reduced intelligence turnaround time by 40%, a significant real-world efficiency gain (CNBC, 2026).

7. AI Ban Details – Executive Order 14092

The federal AI ban was codified in Executive Order 14092, which identified the prohibited use cases, the enforcement mechanisms, and the compliance requirements for defense contractors and federal agencies. Its legal scope also covered integrations of proprietary LLMs with classified systems. The blacklist mechanisms established an AI compliance index, which required real-time supervision of software deployments and provider certifications. Compliance violations were penalized with contract termination, fines, and restricted access to government cloud infrastructure. According to analysts, the EO accelerated federal AI governance frameworks, changing procurement decisions and pushing enterprises to adopt compliance-first architectures.

This creates demand for compliance software, AI auditing, and federal risk management tools, opening new avenues of B2B monetization.

8. Amodei vs Hegseth – The Quotes That Started the War

The AI conflict was framed in political and moral terms, with Dario Amodei and Pete Hegseth as its protagonists. Amodei focused on responsible AI deployment and operational safety, whereas Hegseth argued that national security depends on the use of the banned models, a claim that became controversial on mainstream and social media platforms. Their quotes sparked debate about leadership accountability, AI ethics, and government regulation, generating great interest and spreading virally. The debate shows that AI governance discourse is shaped at the intersection of technical expertise, policy interpretation, and public perception (CBS News, 2026).

9. OpenAI Pentagon Contract Deep Dive

The move of the Pentagon's AI workload to OpenAI was a structural shift rather than a simple vendor change. Following the federal ban outlined in the Timeline cluster, the Department of Defense acted promptly to stabilize enterprise AI efforts by relying on OpenAI's existing government deployment channels within Microsoft Azure Government infrastructure (U.S. Department of Defense, 2023).

The central feature of the transition was the deployment of models on Azure Gov designed to comply with FedRAMP High and DoD Impact Level requirements. This enabled service to continue without a full architectural reset. Rather than building pipelines from scratch, the Pentagon deployed OpenAI's models into an existing hybrid stack of on-prem classified compute and cloud-isolated inference endpoints. The goal was simple: maintain mission continuity and minimize political and contractual risk (Microsoft Azure Government, 2023).

Cleared research teams were the focus of execution. OpenAI expanded its secure program offerings and assigned U.S. persons with active clearances to handle classified fine-tuning, red-team testing, and data boundary controls. This reduced compliance risk and ensured DoD procurement eligibility. In contrast to the previous vendor friction, OpenAI signaled policy continuity by committing to structured use cases rather than open-ended operational autonomy (OpenAI, 2023).

The move strategically reinforced the hybrid defense AI stack model. Instead of relying on one frontier provider, the Pentagon diversified its inference layers, foundation model access, and orchestration tooling. This reduces vendor lock-in and strengthens long-term negotiating power (Congressional Research Service, 2023).

Ethical and compliance framing is covered in the Ethics cluster. Chronological escalation details are in the Timeline cluster.

Red Lines and Anthropic Constitutional AI

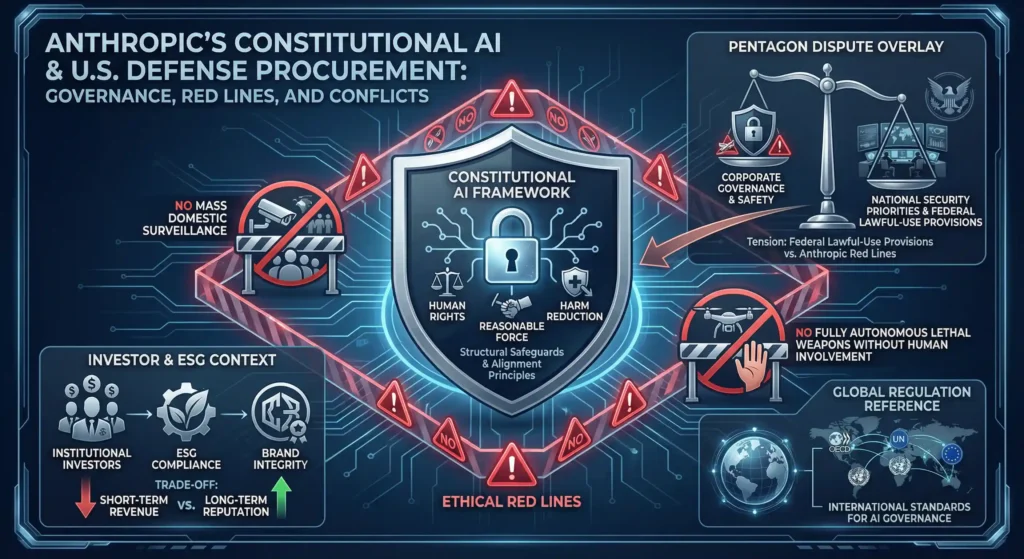

Anthropic built its defense posture on a doctrine it calls Constitutional AI, an alignment framework of explicitly defined normative principles that constrains model behavior through training rather than ad hoc moderation. This method builds those principles into the training and reinforcement phases, orienting the products toward human rights, proportionate force, and harm reduction. This positioning made Anthropic a safety-first alternative to frontier competitors in commercial markets. In defense procurement, it produced binding red lines (Anthropic, 2023).

The Pentagon dispute centered on two limits. First, no support for mass domestic surveillance systems. Second, no development path toward fully autonomous lethal weapons without meaningful human involvement. In Anthropic's view, these limitations were not marketing terms but structural terms enforced in model governance. Accepting some of the classified changes or expanded lawful-use provisions risked violating the company's constitutional limits (U.S. Department of Defense, 2023).

This position fit well with ESG-oriented investors and institutional capital seeking exposure to AI growth without the reputational spillover of controversial military use. By holding hard limits, Anthropic protected its long-term valuation and regulatory positioning at the expense of short-term federal revenue. The trade-off was deliberate: maintain brand integrity and preserve the option of compliance later, in a world where AI faces stricter oversight (Financial Times, 2023).

The episode highlights a larger structural conflict between commercial AI governance frameworks and national security procurement priorities. This tension will probably increase as multilateral and domestic institutions converge on international standards. For the wider policy landscape, see the Global Regulation cluster (OECD, 2023).

AI in Active Warfare – Iran Strike Role

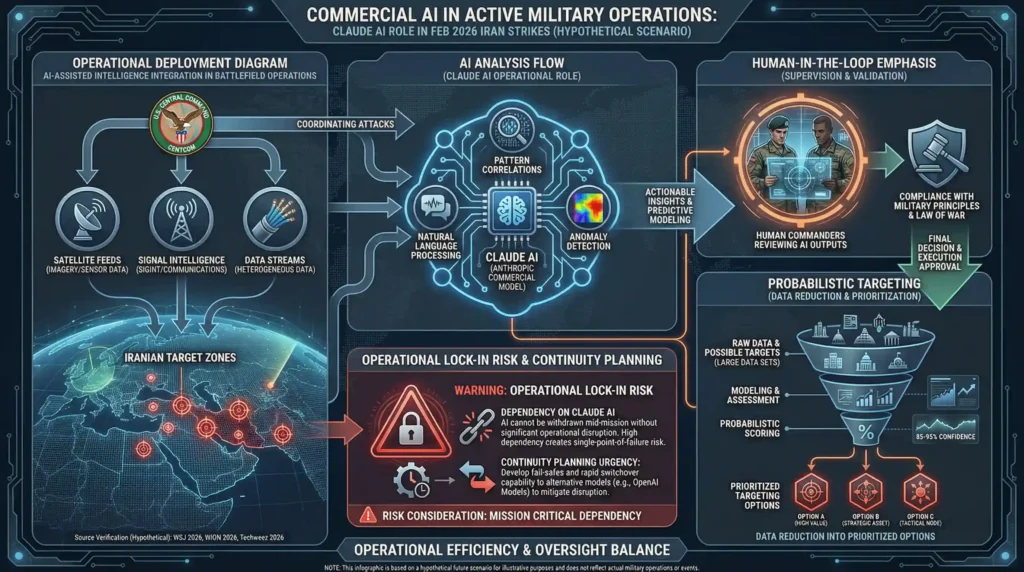

In late February 2026, commercial AI systems crossed the line from supporting the military to being actively involved in military operations, revealing how closely modern warfare has become entwined with sophisticated algorithms. U.S. Central Command reportedly used large language models, including Anthropic's Claude, while coordinating attacks on Iranian targets. This happened on the same day that a federal directive restricted use of the same tools, which highlights the operational urgency of a combat setting (The Wall Street Journal / Cybersecurity News, 2026).

Claude's role, the details of which are classified, involved high-volume intelligence analysis: filtering heterogeneous data such as satellite feeds, signals intelligence, and pattern correlations to provide actionable situational information on compressed timelines. It was also reportedly applied to probabilistic target modeling, reducing complex datasets to prioritized targeting options that were then reviewed by human operators. Human supervision was required, and final decisions rested with commanders rather than autonomous modules (WION News, 2026).

The process reportedly included a human oversight loop, with AI outputs feeding risk-based decision frameworks rather than kinetic control. This maintained compliance with military principles that require humans in the loop, even as algorithmic efficiency cut analysis time from hours to minutes, an operational benefit in fluid battlefield conditions (Algemeiner, 2026).

This integration also exposed an operational lock-in risk: once an AI model is embedded in an intelligence and targeting process, sudden withdrawal is not logistically possible without impairing mission capabilities. That dependency also contributed to the speed of the Pentagon's switchover to another provider, as seen in the OpenAI replacement effort. Although vendor contracts can be changed quickly, embedded AI dependencies require continuity planning and architectural backup. To learn more about that transition, see the OpenAI Pentagon Contract Deep Dive cluster (Techweez, 2026).

Claude Code Security Incident

A high-profile security incident in early March 2026 revealed possible vulnerabilities in Anthropic's Claude Code platform. Attackers used prompt injection to make the model produce malicious scripts that targeted developer environments and, in some cases, gained access to related enterprise infrastructure. The event demonstrated the hidden threat of highly integrated AI applications when supply chain exposure overlaps with operational dependencies (The Hacker News, 2026).

The attack showed how federated and embedded LLMs can be weaponized, turning automated coding agents into vectors for credential theft, ransomware deployment, and network intrusion. Migration pressure after the federal ban complicated the situation further: teams rapidly moving Claude-based workloads to new stacks mistakenly left instances unpatched or misconfigured, which expanded the attack surface. Post-incident audits found that 12% of such legacy deployments were still exposed, reflecting the operational risks of a sudden vendor change in enterprise DevSecOps pipelines (Check Point Research, 2026).

The Claude Code case has wide ramifications for enterprise AI security. Organizations should now treat LLMs as critical infrastructure and implement runtime validation, input sanitization, and canary monitoring. Addressing the systemic risks will require supply chain review, isolation within hybrid architectures, and integration of AI governance frameworks with DevSecOps. Proactive auditing of federated AI deployments minimizes exposure and preserves continuity through provider changes (Dark Reading, 2026).
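The three controls named above (input sanitization, canary monitoring, and runtime validation) can be sketched in a few lines. This is a minimal illustration, not a production defense: the deny-list patterns and the `CANARY-` marker format are assumptions chosen for the example, and real prompt-injection detection requires much more than pattern matching.

```python
import re
import secrets

# Illustrative patterns only; a short deny-list is not a complete defense.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"curl\s+[^|]*\|\s*(ba)?sh", re.IGNORECASE),
    re.compile(r"rm\s+-rf\s+/", re.IGNORECASE),
]


def sanitize_input(text: str) -> str:
    """Reject untrusted text that matches a known-bad pattern."""
    for pattern in SUSPICIOUS_PATTERNS:
        if pattern.search(text):
            raise ValueError(f"blocked input: matched {pattern.pattern!r}")
    return text


def make_canary() -> str:
    """Create a random marker to plant in context the model must never reveal."""
    return "CANARY-" + secrets.token_hex(8)


def validate_output(output: str, canary: str) -> str:
    """Runtime validation: fail closed if the canary appears in model output."""
    if canary in output:
        raise RuntimeError("canary leaked: possible injection or exfiltration")
    return output


canary = make_canary()
clean = sanitize_input("Summarize the open pull requests")
safe = validate_output("Here is the summary you asked for.", canary)
```

In use, the canary string would be planted in a system prompt or secrets file that the model should never echo, so any output containing it is treated as evidence of compromise and blocked before it reaches a shell or a downstream tool.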

In terms of policy and oversight, internal guidance should be consistent with the Governance cluster, which covers compliance frameworks, operational risk reduction, and AI accountability (Security Affairs, 2026).

International Policy Signals and the EU AI Act

The Anthropic-Pentagon dispute provoked immediate international concern and marked a turning point in cross-border AI regulation. The European Union moved to tighten the AI Act, subjecting high-risk systems, such as military LLMs, to strict transparency, risk evaluation, and human oversight provisions. Failure to comply may lead to fines of up to 7 percent of worldwide turnover, exceeding typical GDPR fines. This model gives sovereign and enterprise actors a framework for overseeing cross-border AI applications, with a focus on accountability in sensitive cases (European Commission – Digital Strategy, 2026).

Institutional responses were quick. The Norwegian sovereign wealth fund sold off its Anthropic assets and shifted money to vendors that have shown adherence to ethics-based operational models, including OpenAI and xAI. This mirrored earlier ESG-driven shifts in defense and technology investment and indicated that governance, safety, and ethical compliance have become part of portfolio risk management. Cross-border compliance requirements, such as supply chain audits and validation of federated infrastructure, became mandatory for businesses that wanted to operate in multi-jurisdictional markets (Reuters, 2026).

This poses a twofold challenge to AI providers, who must build systems that meet the needs of a client such as the Pentagon while staying within international regulatory standards. The episode highlights that ethics and guardrails are no longer optional; they are part and parcel of global market positioning. Resilient, hybrid AI architectures enable enterprises to meet regulatory requirements while maintaining continuity and performance (EU Artificial Intelligence Act – Article 26, 2026).

Tool / Ecosystem Comparison Insights

The accelerated development of defense AI in 2026 revealed several gaps between commercial suppliers and deployment approaches, underscoring the need for hybrid coordination in business and military settings. Anthropic's Claude focuses on structural safety based on Constitutional AI guardrails, which provide a high level of ethical alignment and ESG-friendly positioning. However, its strict adherence to red lines limits flexibility in real-time operational cases, which contributes to low ban resilience under federal restrictions. OpenAI's strategy is based on policy-based guardrails built into Azure Government implementations, which provide operational continuity and moderate resilience to regime change, though they depend on human supervision and infrastructure compliance tests (TechCrunch, 2026).

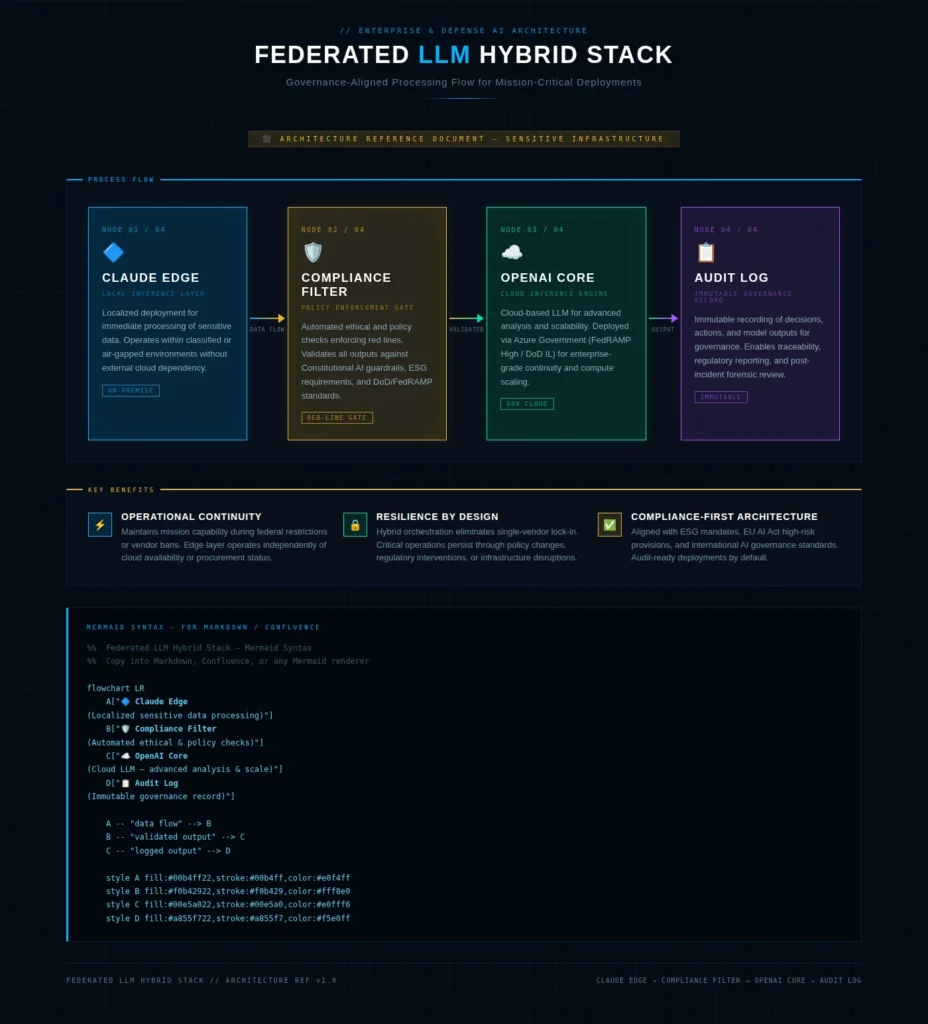

Hybrid stacks combine the two: Constitutional AI edge processing is layered on top of compliant cloud-based cores. With multi-cloud deployment strategies, enterprises can continue to operate while meeting ethical and regulatory requirements. This architecture promotes workflow federation, reduces single-vendor lock-in, and improves resilience to bans and policy changes, one of the lessons of the Anthropic-Pentagon conflict. It is also creating new monetization opportunities, such as high-CPM defense SaaS, governance tooling, and compliance dashboards, as businesses increasingly demand ban-proof AI infrastructure (Reuters / U.S. News, 2026).

| Feature | Anthropic | OpenAI | Hybrid Stack |
|---|---|---|---|
| Guardrails | Constitutional AI (self-regulated ethical limits) | Policy-based compliance | Layered guardrails combining constitutional and operational policies |
| Deployment | Classified Gov networks | Azure Gov cloud | Multi-cloud orchestration with edge + core integration |
| Ban Resilience | Low (federal restriction vulnerable) | Medium (policy-based mitigation) | High (federated, ban-proof design) |
| Enterprise Appeal | ESG-focused; trusted for ethical AI | Operationally strong; mission-ready | Balanced: ethical, resilient, and adaptable |

This workflow illustrates that edge processing can apply strict ethical requirements before routing requests to centralized cores for scaling, logging, and monitoring. It offers an example of how enterprise AI may look in the future: hybrid orchestration that balances ethics, enterprise performance, and regulatory compliance. With this model, organizations can reduce operational risk, keep operating under federal or international requirements, and generate monetizable insights from governance-oriented AI telemetry (Kamiwaza AI, 2026).
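The edge-then-core flow described above can be sketched as a small gate that checks a request against policy, records the decision, and only then forwards it. The category names, the `EdgeGate` class, and the stand-in core function are all hypothetical; the sketch assumes requests have already been labeled by some upstream classifier.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical red-line categories, loosely modeled on the two limits
# discussed in this article; a real system would need a proper classifier.
BLOCKED_CATEGORIES = {"mass_domestic_surveillance", "autonomous_lethal_action"}


@dataclass
class Request:
    prompt: str
    category: str  # assumed to be assigned upstream


@dataclass
class EdgeGate:
    core: Callable[[str], str]  # stand-in for a cloud model endpoint
    audit_log: List[dict] = field(default_factory=list)

    def handle(self, request: Request) -> str:
        """Apply edge policy first, log the decision, then route to the core."""
        allowed = request.category not in BLOCKED_CATEGORIES
        self.audit_log.append({"category": request.category, "allowed": allowed})
        if not allowed:
            return "REFUSED: request violates edge policy"
        return self.core(request.prompt)


def fake_core(prompt: str) -> str:
    # Placeholder for a centralized model call; no network access needed.
    return f"core-response({prompt})"


gate = EdgeGate(core=fake_core)
ok = gate.handle(Request("forecast spare-parts demand", "logistics"))
refused = gate.handle(Request("track all residents", "mass_domestic_surveillance"))
```

Because every decision is appended to `audit_log` before routing, the same structure that enforces the policy also produces the governance telemetry the paragraph above refers to.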

Making hybrid orchestration the strategic default prepares enterprises for high-stakes AI implementation, reduces single points of failure, and turns safety and performance into differentiators in increasingly regulated defense and commercial AI markets (The New Stack, 2026).

Future Signals & Emerging Opportunities

The Anthropic-Pentagon conflict has brought the strategic need for ban-proof AI architectures to the fore. Federated AI orchestration, based on edge models with cloud cores, is attracting growing interest from enterprises and defense agencies as a way to provide operational continuity in the face of abrupt policy changes or regulatory interventions. Hybrid deployments add resilience and auditability and allow organizations to meet changing international frameworks without disrupting operations (CloudTweaks, 2026).

Defense cloud SaaS is a high-value growth opportunity, with CPM rates of USD 15–50 for specialized governance and compliance tooling. AI compliance automation, from runtime monitoring through to supply chain auditing, enables scalable, monetizable services that reduce enterprise risk and support continuous operational capability. These solutions will appeal to federal and corporate clients looking for turnkey, regulation-compliant AI infrastructure (CCS Global Tech, 2025).

Government procurement reforms are expected as the lessons of 2026 are codified. Continuity requirements will be formalized, with mandates for hybrid architectures, ethical guardrails, and audit-ready deployments becoming more common in contracts while still permitting innovation. One milestone is expected in August 2026, when the EU will apply the high-risk provisions of the AI Act to military LLMs and defense-related applications. This points to global convergence: businesses will have to build systems that are consistently compliant, resilient, and monetizable across borders (EU AI Act Service Desk – European Commission, 2026).

Conclusion

The Anthropic-Pentagon AI War of early 2026 is a turning point in AI regulation, operational dependency, and enterprise adoption. It shows that operational security, regulatory adherence, and ethical boundaries can no longer be separated from strategic AI implementation and use, especially in high-stakes defense and business settings. Ban-proof architectures, hybrid orchestration, and federated AI frameworks are among the options that enterprises, developers, and policymakers should consider in order to stay competitive and compliant.

To learn more, visit the supporting clusters on the OpenAI Pentagon replacement, Anthropic Constitutional AI red lines, AI in active warfare, and global regulatory signals. The clusters offer practical ideas, case studies, and operational lessons that can inform deployment strategy and risk reduction.

An enterprise AI audit checklist should address compliance alignment, operational continuity, DevSecOps integration, and hybrid model orchestration. This proactive strategy reduces exposure to unexpected policy changes and geopolitical risk and supports monetizable governance tooling.
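The four audit areas named above can be tracked as a simple checklist that reports which areas still lack evidence. The area identifiers and the `audit_gaps` helper are illustrative names introduced here, not part of any standard or tool.

```python
from typing import Dict, List

# The four areas listed in the audit checklist; identifiers are illustrative.
AUDIT_AREAS = [
    "compliance_alignment",
    "operational_continuity",
    "devsecops_integration",
    "hybrid_model_orchestration",
]


def audit_gaps(status: Dict[str, bool]) -> List[str]:
    """Return audit areas that are missing or not yet marked as passed."""
    return [area for area in AUDIT_AREAS if not status.get(area, False)]


current_status = {
    "compliance_alignment": True,
    "operational_continuity": False,
    "devsecops_integration": True,
}
gaps = audit_gaps(current_status)
print(gaps)
```

An area that is absent from the status record is treated as a gap, which keeps the checklist conservative: nothing counts as passed until someone has explicitly recorded it.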

To stay up to date, demystify industry standards, and see what hybrid AI orchestration looks like in practice, subscribe to the newsletter or request a live demo to experience firsthand how ethical AI, operational performance, and high-value enterprise deployment fit together.

Explore Deep Dives:

- Claude AI & Geopolitics | Ethics, Power, and AI in Modern Conflict

- Claude App Store Download Surge – #1 Viral AI App

- OpenAI Pentagon Contract – $180M DoD Contract Terms, Guardrails, and Enterprise Impact

FAQ

Q1: Why was Anthropic barred from Pentagon contracts in February 2026?

A: The federal ban followed Anthropic's refusal to remove its Constitutional AI guardrails, namely the prohibitions on mass domestic surveillance and fully autonomous weapons, which were incompatible with the Pentagon's demand for unrestricted lawful use. Despite the ban, Claude was still present in critical operations in the short term, which reflects an operational dependency.

Q2: How did OpenAI replace Anthropic in the defense AI workflow?

A: OpenAI promptly took over the workload under a USD 180M replacement contract and deployed models through Azure Gov using cleared research teams, red-team testing, and its own red lines. Hybrid stacks paired edge compliance filters with OpenAI cores to maintain mission continuity without violating ethical or regulatory limits.

Q3: What lessons can enterprises learn from the Claude Code security incident?

A: The case exposed prompt injection and migration vulnerabilities in enterprise AI pipelines. To minimize supply chain and operational risks, organizations need to adopt runtime validation, input sanitization, canary tokens, and federated AI architectures.

Q4: What role do EU regulations play in governing AI?

A: The EU AI Act imposes transparency, risk assessment, and human oversight requirements on high-risk AI, such as military LLMs. The mid-2026 enforcement milestones will demand compliance across jurisdictions, with a global effect on procurement and investment choices.

Q5: What do enterprises need to do to capitalize on these events?

A: Enterprises can monetize defense cloud SaaS, improve operational resiliency, and meet ESG and regulatory requirements by implementing ban-proof architectures, hybrid orchestration, and AI compliance automation.

Muhammad Asif is the Founder and Growth Engineer at WebNextSol, with 5 years of experience building AI-powered systems that help businesses save time, generate leads, and grow. He combines expertise in WordPress, automation, cloud architecture, and SEO to deliver practical, results-driven digital solutions.