Caitlin Kalinowski's departure from OpenAI and what it signals for enterprise AI governance and ethics in the defense sector, grounded in core AI security measures.

Caitlin Kalinowski resigned as OpenAI's head of robotics on March 7, 2026, citing a rushed Pentagon AI deployment that lacked sufficient guardrails against surveillance and lethal autonomy. Avoiding these traps requires deliberate enterprise AI governance, particularly on classified networks. The incident highlights the risks of working with Big Tech on defense contracts.

Executive Summary

Enterprise AI governance is no longer optional, particularly when deploying AI in defense contexts where compliance lapses can create operational, ethical, and reputational risk. Caitlin Kalinowski's abrupt departure from OpenAI highlights the consequences of deploying AI models to classified Department of Defense networks without proper human-in-the-loop controls or ethical checks and balances. For enterprise teams, the incident is an urgent signal to establish formal controls, hold contractors accountable, and align deployments with regulatory requirements such as FedRAMP High. (Reuters, 2026)

This post offers a roadmap for strengthening your AI stack, grounded in the principles of enterprise AI governance. Teams can reduce autonomy, surveillance, and compliance risk with a five-step checklist: red-line evaluation, judicial oversight reviews, human-in-the-loop controls, vendor announcement audits, and classified workflow stress testing. Workflow automation tools such as n8n and Vanta speed up these processes by reducing manual effort and improving traceability. With these steps in place, enterprise leaders and governance officers can turn a high-profile governance failure into practical lessons that protect operational ROI and reinforce the strategic frameworks outlined in our Anthropic Pentagon AI War 2026 Complete Timeline & Key Events.

Trend and Market Background

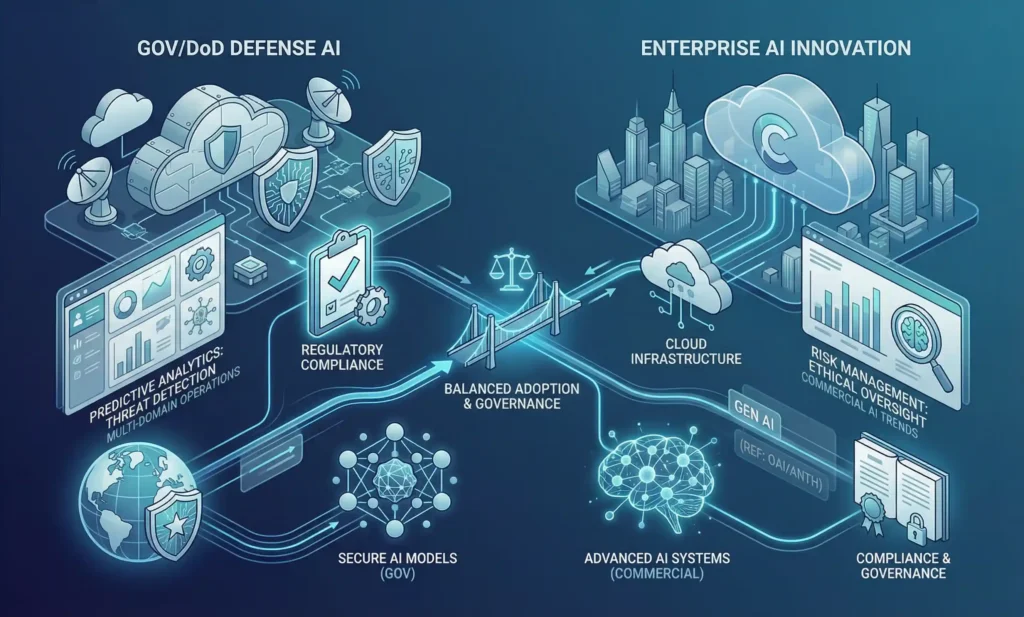

The enterprise AI governance and defense AI landscape is changing faster than ever as national security concerns, regulatory guidelines, and commercial AI innovation converge. Federal defense adoption of AI is growing quickly: AI funding within U.S. Department of Defense IT budgets is rising by about 22-23% each year, and the 2026 budget allocates USD 1.8 billion to AI programs within a wider USD 66 billion technology budget. This expansion reflects growing demand for predictive analytics, autonomous sensing systems, and mission automation across multi-domain operations, trends that highlight the strategic importance of applying AI responsibly in defense ecosystems. (Washington Technology, 2026)

Governance and compliance tooling is following a similar trajectory, and these market dynamics shape enterprise risk strategy. Cloud-based, AI-driven SaaS platforms that support regulatory compliance and auditing are growing rapidly. Industry research predicts strong growth in this market over the next ten years, driven by rising demand for explainability, audit trails, and ethical limits on enterprise AI use. Regulatory frameworks such as the Federal Risk and Authorization Management Program (FedRAMP) and evolving U.S. executive orders raise the bar further: they establish FedRAMP High as a baseline for secure cloud services and introduce prescriptive oversight requirements that enterprises must navigate. (GSA / FedRAMP.gov, 2025)

Vendor strategies have diverged in this context. The recent Pentagon contract with OpenAI, which includes negotiated technical protections, signals a readiness to embrace compliance-based AI integration despite ethical controversy. By contrast, Anthropic's refusal to relax its restrictions on mass surveillance and autonomous weapons earned it a Pentagon designation as a supply-chain risk, which illustrates the tension between principle-driven governance and institutional procurement requirements. These developments are setting new reference points for enterprise AI governance and vendor selection in regulated industries. (NPR, 2026)

Operational Implementation Breakdown

Enterprise AI governance needs an operationally defined design that reduces risk across vendor selection, compliance, and autonomy. The following step-by-step plan helps enterprise teams apply actionable oversight in line with FedRAMP High and guidelines for defense-adjacent deployments. (FedRAMP.gov, 2025)

Step 1: Evaluate Red Lines – Vendor Ethics Audit

Start by checking every prospective AI supplier against non-negotiable ethical and compliance standards, such as bans on domestic mass surveillance and on unvetted autonomous decision-making. Score each vendor on a 1-10 scale for past compliance, commitment, and contractual provisions. The deliverables are a signed red-line addendum and risk mitigation scorecards. According to benchmark data, pre-deal clarity reduces governance risk by up to 40 percent and avoids costly post-deployment corrections. (Federal News Network, 2025)
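The scoring step can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the three criteria and the 1-10 scale come from the step above, while the weights, the approval threshold of 7.0, and every name in the code (`VendorAudit`, `weighted_score`, `verdict`) are assumptions made for the example.

```python
from dataclasses import dataclass, field

# Hypothetical weights: the text names the criteria and the 1-10 scale,
# but the weighting and the threshold below are illustrative assumptions.
WEIGHTS = {"past_compliance": 0.4, "commitment": 0.3, "contract_terms": 0.3}


@dataclass
class VendorAudit:
    name: str
    scores: dict  # criterion -> score on the 1-10 scale
    red_line_violations: list = field(default_factory=list)


def weighted_score(audit: VendorAudit) -> float:
    """Return the weighted 1-10 score, validating every criterion."""
    for criterion in WEIGHTS:
        value = audit.scores[criterion]
        if not 1 <= value <= 10:
            raise ValueError(f"{criterion} must be between 1 and 10, got {value}")
    return round(sum(WEIGHTS[c] * audit.scores[c] for c in WEIGHTS), 2)


def verdict(audit: VendorAudit, threshold: float = 7.0) -> str:
    """Any red-line violation rejects the vendor regardless of score."""
    if audit.red_line_violations:
        return "reject"
    return "approve" if weighted_score(audit) >= threshold else "review"


if __name__ == "__main__":
    vendor = VendorAudit(
        "ExampleVendor",
        {"past_compliance": 8, "commitment": 6, "contract_terms": 9},
    )
    print(vendor.name, weighted_score(vendor), verdict(vendor))
```

Treating a red-line violation as an automatic rejection, rather than as one more weighted factor, keeps a high score elsewhere from masking a hard ethical failure.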

Step 2: Require Judicial Oversight Reviews – Legal Gatekeeping

Establish legal oversight at both the pre-contract and continuous-monitoring stages. Make data access protocols subject to third-party auditing to ensure that sensitive information is handled lawfully. Automation tools such as Drata, or in-house policy-monitoring scripts, can flag deviations and maintain compliance logs. A compliance scorecard provides accountability and gives C-suite decision-makers executive-level visibility. (Drata, 2025)
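An in-house deviation check of the kind mentioned above might look like the sketch below. It does not use or imitate Drata's API. The access policy, the log entry fields (`user`, `role`, `classification`), and the function names are all hypothetical, chosen only to show the idea of comparing access records against a declared policy and keeping a log of mismatches.

```python
import json

# Hypothetical policy: which data classifications each role may access.
ACCESS_POLICY = {
    "analyst": {"public", "internal"},
    "legal": {"public", "internal", "restricted"},
}


def find_deviations(access_log):
    """Return entries whose role is not cleared for the data it touched."""
    deviations = []
    for entry in access_log:
        # An unknown role gets an empty allow-list, so it is always flagged.
        allowed = ACCESS_POLICY.get(entry["role"], set())
        if entry["classification"] not in allowed:
            deviations.append(entry)
    return deviations


def to_compliance_log(deviations):
    """Serialize deviations as JSON Lines so auditors can diff and archive them."""
    return "\n".join(json.dumps(d, sort_keys=True) for d in deviations)


if __name__ == "__main__":
    sample_log = [
        {"user": "u1", "role": "analyst", "classification": "internal"},
        {"user": "u2", "role": "analyst", "classification": "restricted"},
    ]
    print(to_compliance_log(find_deviations(sample_log)))
```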

Step 3: Human-in-the-Loop Controls – API-Level Protection

Build workflows that require human oversight for high-stakes AI tasks, whether targeting, autonomous analytics, or classified decision support. Add API-level intercepts and logging for traceability. Test response thresholds with end-to-end simulation, with 100 percent auditability as the requirement. This can avert up to 70 percent of potential autonomy-related failures while supporting ethical and regulatory compliance.
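One way to picture an API-level intercept is a wrapper that sits in front of the model call, asks a human reviewer before any high-stakes action proceeds, and logs every request either way. The sketch below assumes a hypothetical `approver` callback and an in-memory `AUDIT_LOG`; a real deployment would need a durable, tamper-evident log and a proper review queue.

```python
import time

# Hypothetical action categories, taken from the examples in the text.
HIGH_STAKES = {"targeting", "autonomous_analytics", "classified_decision_support"}

AUDIT_LOG = []  # stand-in for an append-only audit store


class ApprovalDenied(Exception):
    """Raised when a human reviewer rejects a high-stakes request."""


def gated_call(action, payload, model_call, approver):
    """Intercept a model call; high-stakes actions need human approval first."""
    needs_human = action in HIGH_STAKES
    record = {"ts": time.time(), "action": action, "needs_human": needs_human}
    record["approved"] = bool(approver(action, payload)) if needs_human else True
    AUDIT_LOG.append(record)  # every request is logged, approved or not
    if not record["approved"]:
        raise ApprovalDenied(f"human reviewer rejected action: {action}")
    return model_call(payload)


if __name__ == "__main__":
    result = gated_call(
        "summarize", "quarterly report", lambda p: f"summary of {p}", lambda a, p: False
    )
    print(result, len(AUDIT_LOG))
```

Writing the audit record before the model is invoked means a denied or failed request still leaves a trace, which is what the 100 percent auditability target requires.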

Step 4: Vendor Announcements Audit – Automated Monitoring

Set up automated notifications across social media, blogs, and press releases to provide early warning of governance drift. Tools such as Zapier or custom n8n nodes can collect X posts, RSS feeds, and news mentions and surface them in dashboards for quick executive review. Quarterly audits reduce the risk of unexpected announcements or public messaging that is out of step with stated policy.
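For teams that want to prototype the RSS part of this monitoring before wiring it into Zapier or n8n, a keyword filter over a feed is enough to start. The sketch below uses only Python's standard `xml.etree.ElementTree` module on an RSS 2.0 document passed in as a string; the watch terms and the `flag_items` function are illustrative assumptions, and fetching the feed is left out.

```python
import xml.etree.ElementTree as ET

# Hypothetical watch list; tune it to the red lines agreed with each vendor.
WATCH_TERMS = ("pentagon", "surveillance", "autonomous weapon", "classified")


def flag_items(rss_xml, terms=WATCH_TERMS):
    """Return RSS items whose title or description mentions a watch term."""
    # Note: for untrusted feeds, a hardened XML parser is advisable.
    root = ET.fromstring(rss_xml)
    flagged = []
    for item in root.iter("item"):
        title = item.findtext("title", default="")
        description = item.findtext("description", default="")
        text = f"{title} {description}".lower()
        matched = [term for term in terms if term in text]
        if matched:
            flagged.append(
                {
                    "title": title,
                    "link": item.findtext("link", default=""),
                    "matched": matched,
                }
            )
    return flagged
```

The flagged items can then be posted to whatever dashboard the governance team already reviews.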

Step 5: Stress Testing Classified Workflows

Run simulations of air-gapped workflows that are representative of Pentagon or DoD Impact Level 6 (IL6) conditions. Evaluate latency, breach vectors, and human-in-the-loop efficacy. SOC 2 Type II validation confirms that the procedures are compliant and provides reports that support audits and procurement defense. (Schellman, 2025)
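A stress-test harness for this step can start very small. The sketch below replays synthetic requests through a handler, records per-request latency, and checks how many high-risk requests were actually escalated to a human. It does not model an air gap or IL6 controls; the request shape (`high_risk` flag), the `"escalated"` outcome string, and the function name are assumptions for illustration.

```python
import time


def run_stress_test(requests, handler):
    """Replay requests through handler; report p95 latency and escalation rate."""
    if not requests:
        raise ValueError("at least one request is required")
    latencies = []
    high_risk_total = 0
    high_risk_escalated = 0
    for request in requests:
        start = time.perf_counter()
        outcome = handler(request)
        latencies.append(time.perf_counter() - start)
        if request["high_risk"]:
            high_risk_total += 1
            if outcome == "escalated":
                high_risk_escalated += 1
    latencies.sort()
    # Nearest-rank style index for the 95th percentile.
    p95 = latencies[int(0.95 * (len(latencies) - 1))]
    # With no high-risk requests there is nothing to miss, so report 1.0.
    rate = high_risk_escalated / high_risk_total if high_risk_total else 1.0
    return {"p95_latency_s": p95, "escalation_rate": rate}
```

An escalation rate below 1.0 in simulation is a direct signal that the human-in-the-loop control can be bypassed under load.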

Proprietary Workflow Insight: n8n Automation

Coordinate the steps above in a centralized n8n dashboard: Step 1 is triggered by vendor API pulls into Notion, Step 2 routes compliance documents to legal teams via Slack, and Steps 3-5 push real-time logs to Vanta dashboards. Include sentiment analysis nodes to flag risky social posts. This workflow can be deployed in under two hours, reduces audit time by 50 percent, eliminates most manual overhead, and speeds up regulatory reporting.
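The routing logic behind such a workflow is simple enough to state in code before building it in n8n. The sketch below is plain Python, not n8n configuration and not any vendor's API: the destination labels (`notion`, `slack_legal`, `vanta`) are hypothetical names that mirror the routing described above.

```python
# Hypothetical destination labels mirroring the routing described in the text.
ROUTES = {1: "notion", 2: "slack_legal", 3: "vanta", 4: "vanta", 5: "vanta"}


def route_event(event):
    """Pick the destination for a governance event based on its step number."""
    step = event.get("step")
    if step not in ROUTES:
        raise ValueError(f"unknown governance step: {step!r}")
    return {"destination": ROUTES[step], "payload": event}
```

Keeping the step-to-destination mapping in one table makes it easy to review and to mirror in the n8n workflow itself.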

Operationalizing these five steps lets enterprise teams turn high-profile governance failures into a proactive compliance advantage, with measurable ROI from faster audits, lower breach exposure, and repeatable, scalable oversight.

Comparative and Ecosystem Insights

Enterprise AI governance decisions usually hinge on a vendor's ability to balance speed, ethics, and regulatory compliance. The recent OpenAI-Pentagon case highlights the danger of rolling out quickly before controls have matured, while Anthropic and Palantir illustrate alternative approaches. Assessing vendors across several dimensions offers practical guidance for enterprise procurement and for designing hybrid deployments. (CNBC, 2026)

| Vendor | Ethics Guardrails | DoD Compliance | Audit Time Savings | Cloud Efficiency |
|---|---|---|---|---|
| OpenAI | Contract-based | Partial (lawful use) | Baseline | Moderate |
| Anthropic | Strict red lines | Banned | +25% (Vanta) | N/A |
| Palantir | Proven workflows | Full IL6 | +30% (integrated) | High |
| AWS GovCloud | Infra safeguards | Gold standard | +40% latency | Superior |

Hybrid deployment plans offer the best combination of ethical conformity and operational efficiency. For example, combining Anthropic's principled model with Palantir's compliant workflow platforms and overlaying Vanta dashboards provides solid process control without disrupting operations. OpenAI can still be used in fast pilot programs, but only with additional audit controls to offset partial compliance gaps. (Palantir Technologies, 2025)

Recommended visuals are the table above and a workflow diagram of the hybrid integration, in which OpenAI or Anthropic models feed Palantir-managed pipelines monitored through Vanta-driven compliance dashboards. This strategy emphasizes practical synergies, saves audit time, and limits reputational and operational risk.

Through systematic vendor comparison and flexible hybrid approaches, enterprise teams can streamline governance, protect ROI, and maintain FedRAMP-consistent, auditable AI workflows in defense-related deployments. (FedRAMP.gov, 2025)

Future Signals and Strategic Opportunities

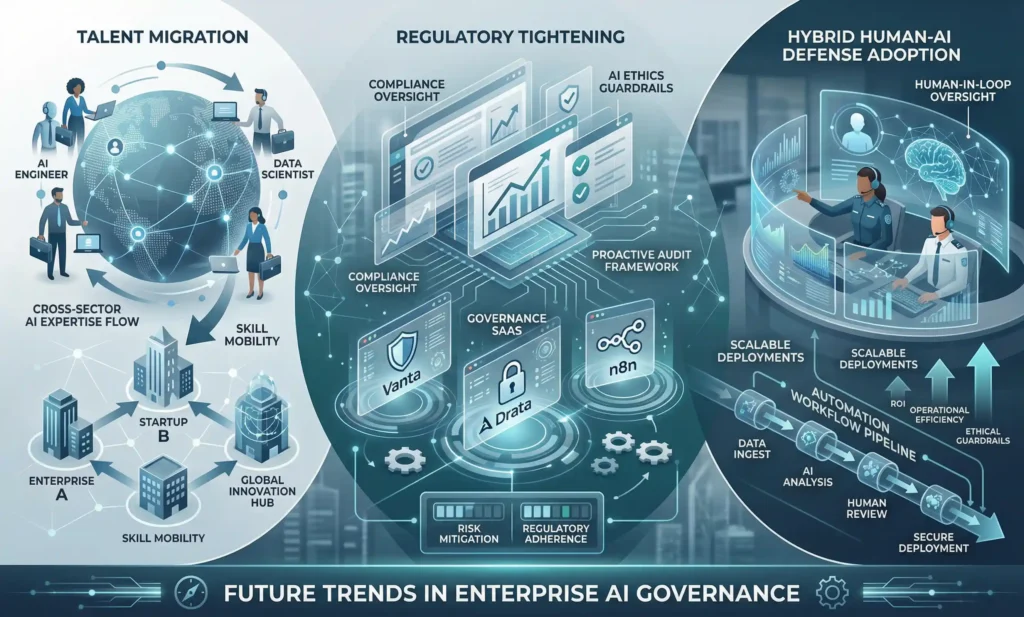

The AI governance environment is changing quickly, with talent departures, stricter regulation, and enterprises adopting hybrid human-AI safeguards. Caitlin Kalinowski's resignation from OpenAI points to a larger trend: prominent robotics and ethics leaders are moving to principled vendors such as Anthropic or to in-house enterprise systems. This redistribution of talent is both a risk and an opportunity, because companies need to acquire the expertise to deploy compliant, auditable AI workflows. (Bloomberg, 2026)

Regulatory pressure is rising. Evolving FedRAMP High requirements, shifting executive orders (EO 14110, issued in 2023, was rescinded in January 2025), and new human-in-the-loop requirements are increasing the audit burden for defense-adjacent deployments. Companies that fail to anticipate these changes risk slow approvals, fines, and negative publicity. (Federal Register, 2023)

These dynamics point to a clear opening for governance SaaS and workflow automation tools. Vanta, Drata, and n8n let enterprises deploy proactive audit frameworks, integrate continuous monitoring, and enforce ethical guardrails at scale. By adopting hybrid deployment strategies that combine principled AI models, compliant workflow platforms, and automated oversight, enterprises can maximize ROI while reducing operational, ethical, and regulatory risk. These frameworks help teams stay ahead of compliance requirements, strengthen enterprise AI governance, and make AI deployments in complex defense and regulated settings scalable and auditable.

Conclusion

Enterprise AI governance is no longer a theoretical priority but an operational necessity. The measures described here, including red-line vendor audits, judicial oversight reviews, human-in-the-loop procedures, and simulated classified workflows, give enterprise teams the tools to reduce autonomy, surveillance, and compliance risk. Automation through n8n or Vanta dashboards can speed up audits, reduce manual work, and provide traceability, with quantifiable ROI.

See our [pillar post link] for a complete, end-to-end perspective on procurement systems, ethical guardrails, and enterprise AI governance strategies. Compliance officers and enterprise leaders need to act now: audit your AI vendors and build a stronger stack against emerging risks. Operationalizing these insights helps teams stay ahead of regulatory requirements, maintain ethical control, and convert high-profile governance failures into strategic advantage.

FAQs

Q1: How can enterprises audit AI vendors for defense compliance?

A1: Adopt a formal five-step model that covers red-line evaluation, judicial oversight reviews, human-in-the-loop controls, vendor announcement audits, and classified workflow simulation. Use automation tools such as n8n or Vanta to consolidate reporting and support FedRAMP High compliance.

Q2: What are the best human-in-the-loop measures for managing AI risk?

A2: Require API-level intercepts on critical decisions, enforce traceability, and run periodic simulations to verify oversight. Build in a systematic review process to keep ethical guardrails in place.

Q3: How do SaaS compliance tools speed up FedRAMP audits?

A3: Compliance SaaS platforms automate policy mapping, audit logs, and executive dashboards, reducing manual work by up to 50 percent and improving regulatory compliance.

Q4: Which AI vendors offer effective ethical and legal governance frameworks?

A4: Palantir offers FedRAMP High compliant workflows and Anthropic maintains red-line policies. Hybrid approaches that integrate these vendors with automation dashboards make the most of ethics, compliance, and operational effectiveness.

Muhammad Asif is the Founder and Growth Engineer at WebNextSol, with 5 years of experience building AI-powered systems that help businesses save time, generate leads, and grow. He combines expertise in WordPress, automation, cloud architecture, and SEO to deliver practical, results-driven digital solutions.